Most innovation activities in large organizations follow the same pattern. A challenge is identified. An idea campaign is launched. Employees submit suggestions. A small team reviews them. Most ideas stall. Only a handful move forward.

The bottleneck is capacity. Innovation managers and their teams are expected to scan emerging technologies, run crowdsourcing campaigns, evaluate hundreds of submissions, and maintain a structured pipeline - often with smaller teams than the task demands.

AI agents are changing this equation. Not by replacing human judgment, but by handling the parts of the innovation process that consume the most time and produce the least differentiated value. When AI manages data gathering, idea generation, and initial evaluation, human teams can focus on what actually matters: applicability, feasibility, and industry relevance.

This article presents a four-step framework for integrating AI agents into corporate innovation activities. Each step is grounded in the approach developed jointly by Siemens and ITONICS in a real idea campaign - one that produced a surprising result.

What AI agents in innovation actually do

Before applying any framework, innovation managers need a clear understanding of what AI agents can and cannot do.

AI agents are software systems that can execute multi-step plans autonomously. They access data sources, generate outputs, evaluate results, and iterate - without requiring a human to direct each action. Unlike traditional automation that follows fixed rules, AI agents respond to context and adjust their approach based on what they find.

In innovation, AI agents operate across several roles.

- AI scouts scan global databases to surface emerging technologies, new trends, and unmet market opportunities.

- Idea generators develop full business concepts by analyzing trends, user inputs, and existing knowledge.

- Idea validators assess feasibility, market fit, and viability using data analysis and predictive modeling.

- Portfolio optimizers analyze innovation pipelines and recommend strategies to improve investment decisions and future market fit.

These are not theoretical capabilities. Companies are deploying AI agents today to automate processes that previously required dedicated analysts, consultants, or large innovation teams. The benefit is the ability to run innovation activities at a scale that smaller teams could not sustain manually.

AI agents augment human teams. They do not replace them. The decisions that require industry knowledge, stakeholder judgment, and organizational context remain human.

AI makes the surrounding work faster, broader, and more consistent.

The 4-step framework for AI agents in innovation

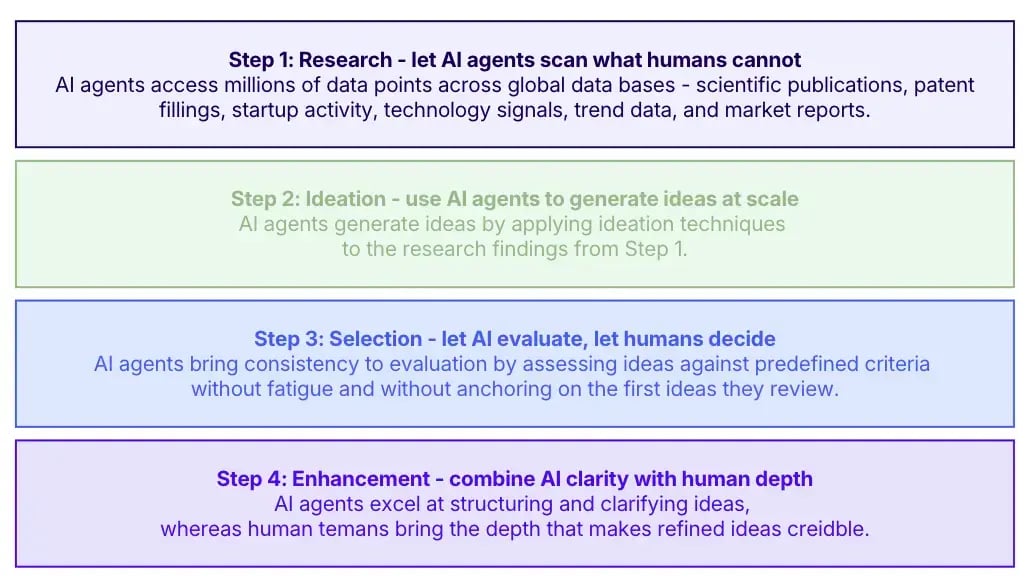

The following framework is drawn from the RISE process developed and applied by ITONICS in collaboration with Siemens. It covers four stages: Research, Ideation, Selection, and Enhancement (Exhibit 1).

This framework is specifically designed to optimize the innovation workflow by integrating AI agents at each stage, streamlining tasks, and improving operational efficiency.

Exhibit 1: The RISE framework for AI agents in innovation

Each stage has a clear purpose, a defined role for AI, and a defined role for humans.

Step #1: Research - let AI agents scan what humans cannot

Most innovation activities start too narrowly. Teams scan sources they already know. They read the same industry reports, follow the same conferences, and benchmark against the same competitors. The result is incremental thinking disguised as innovation.

AI agents solve this by accessing millions of data points across global databases - scientific publications, patent filings, startup activity, technology signals, trend data, and market reports - simultaneously and continuously.

- They identify weak signals before they become obvious trends.

- They surface connections across domains that human researchers would not link together.

The output of this stage is not a final recommendation. It is a rich, structured body of knowledge that informs what ideas are worth generating. AI agents do not decide what matters. They ensure that the knowledge base feeding the innovation process is complete rather than accidentally limited.

How to apply this at your organization

- Define the scope of the research challenge before activating AI.

- Specify the technology domains, market segments, and customer problems the campaign should address.

- Add internal context: proprietary data, customer feedback, product roadmaps, and competitive intelligence that the AI cannot access from public sources.

The combination of AI's breadth and your organization's internal knowledge creates the foundation for genuinely differentiated ideas.
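
One way to make the scope explicit before any AI scan starts is to write it down as a small data structure and check it for gaps. The Python sketch below is only an illustration under stated assumptions: `ResearchScope`, its field names, and `missing_sections` are hypothetical and not part of any ITONICS product, and the example values are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class ResearchScope:
    """Scope of one research challenge. All field names are illustrative."""

    challenge: str
    technology_domains: list[str] = field(default_factory=list)
    market_segments: list[str] = field(default_factory=list)
    customer_problems: list[str] = field(default_factory=list)
    internal_sources: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Return the names of scope sections that are still empty."""
        sections = {
            "technology_domains": self.technology_domains,
            "market_segments": self.market_segments,
            "customer_problems": self.customer_problems,
            "internal_sources": self.internal_sources,
        }
        return [name for name, values in sections.items() if not values]


# Placeholder values for illustration only.
scope = ResearchScope(
    challenge="Industrial Metaverse use cases",
    technology_domains=["simulation", "training"],
    internal_sources=["customer feedback", "product roadmaps"],
)

print(scope.missing_sections())
# prints ['market_segments', 'customer_problems']
```

A check like this makes it visible that market segments and customer problems still need to be specified before the research stage begins.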

The Siemens example

When Siemens Foundational Technologies sought use cases for the Industrial Metaverse, ITONICS Innovation AI conducted an extensive search using a data lake of 54+ million data points, updated daily.

The AI analyzed weak and strong signals, emerging trends, and relevant technologies. Siemens-specific data and publicly accessible Siemens resources were added to ensure the research reflected the company's actual context.

This gave the ideation stage a knowledge base far broader than any single team could have assembled manually.

Step #2: Ideation - use AI agents to generate ideas at scale

The conventional assumption is that ideation requires human creativity. AI challenges this. Not by being more creative than humans, but by being faster, more consistent, and unconstrained by the organizational filters that shape (and limit) human idea generation.

AI agents generate ideas by applying ideation techniques to the research findings from Step 1. They can produce dozens of distinct concepts across different use case clusters in the time it takes a human team to facilitate one workshop. They also avoid two common human limitations:

- They do not get anchored on familiar solutions.

- They do not filter ideas based on internal politics or budget assumptions.

This does not mean human ideation is redundant. Human teams bring industry relevance, stakeholder awareness, and contextual judgment that AI cannot replicate. The right approach is parallel ideation: run AI-generated ideas alongside human-generated ideas, then compare and combine.

The goal is not to decide which is better. It is to create a larger, more diverse pool of ideas than either humans or AI could produce alone. Crowdsourcing campaigns that include AI-generated ideas alongside employee submissions produce richer pipelines than campaigns that rely on human submissions alone.

How to apply this at your organization

- Brief the AI with the same campaign requirements given to human participants: the problem to solve, the target customer, the value to create, and the maturity of technologies needed.

- Instruct the AI to vary style and format to ensure ideas can be evaluated without bias toward their origin.

- Run the AI ideation in parallel with your human crowdsourcing campaign so that both processes complete within the same timeframe.

The Siemens example

Siemens employees and partners were given four weeks to submit Industrial Metaverse use case ideas through the Siemens Innovation Ecosystem platform - a digital platform for collecting ideas across different topics.

Simultaneously, ITONICS Innovation AI generated ideas using the same campaign brief. Out of more than 100 submissions, 14 human ideas and 8 AI ideas were pre-selected for comparison.

The ideas covered seven use case clusters: data collection, simulation, testing, surveillance, training, security, and demonstration. Interestingly, no AI ideas appeared in the clusters of data collection, testing, and demonstration. No human ideas appeared in the training cluster.

This divergence itself reveals something important: AI and human teams naturally gravitate toward different solution spaces, making parallel ideation more valuable than choosing one approach over the other.
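
The divergence can be made concrete with a simple set comparison. The Python sketch below uses only the cluster names and gaps reported above. It assumes that each source contributed at least one pre-selected idea to every cluster not explicitly listed as empty, which the campaign summary implies but does not state outright.

```python
# The seven use case clusters named in the campaign summary.
ALL_CLUSTERS = {
    "data collection", "simulation", "testing", "surveillance",
    "training", "security", "demonstration",
}

# Assumption: a source is present in every cluster not reported as empty for it.
ai_clusters = ALL_CLUSTERS - {"data collection", "testing", "demonstration"}
human_clusters = ALL_CLUSTERS - {"training"}

shared = sorted(ai_clusters & human_clusters)
human_only = sorted(human_clusters - ai_clusters)
ai_only = sorted(ai_clusters - human_clusters)

print("shared:", shared)          # shared: ['security', 'simulation', 'surveillance']
print("human only:", human_only)  # human only: ['data collection', 'demonstration', 'testing']
print("AI only:", ai_only)        # AI only: ['training']
```

Under that assumption, only three of the seven clusters were covered by both sources, and four were reached by just one of them.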

Step #3: Selection - let AI evaluate, let humans decide

Evaluating large volumes of ideas is one of the most resource-intensive parts of the innovation process. It is also one of the most inconsistent. Human evaluators bring bias, fatigue, and varying interpretations of criteria. Ideas evaluated on a Monday morning get different treatment than ideas reviewed on a Friday afternoon.

AI agents bring consistency to evaluation. They can assess ideas against predefined criteria (scalability, market fit, technological maturity, customer value, competitive differentiation) without fatigue and without anchoring on the first ideas they review. This makes the evaluation process more reliable and frees human experts to focus on the judgment calls that require genuine domain knowledge.

The human role in this stage is not to rubber-stamp AI recommendations. It is to assess the dimensions AI cannot evaluate well: organizational fit, stakeholder readiness, cultural alignment, and the kind of industry-specific nuance that comes from years of domain experience. AI makes the evaluation faster and more structured. Humans make it contextually accurate.

How to apply this at your organization

- Define evaluation criteria before the campaign launches - not after.

- Assign each criterion a weight that reflects your organization's strategic priorities.

- Have AI score all ideas against these criteria as a first pass. Then convene a focused human review panel to assess the top-scoring ideas on dimensions requiring domain judgment.

- Keep the panel small: three to five experts with directly relevant knowledge, not a broad committee.

- Document the reasoning behind every final decision to create a learning loop for future campaigns.
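
The first-pass scoring described above can be sketched in a few lines of Python. This is a minimal illustration, not the scoring model used by ITONICS or Siemens: the criterion names, weights, idea names, and scores are placeholder values, and `weighted_score` and `shortlist` are hypothetical helper functions.

```python
def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of criterion scores. Weights need not sum to 1."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[c] * w for c, w in weights.items()) / total_weight


def shortlist(ideas: dict[str, dict[str, float]],
              weights: dict[str, float], top_n: int) -> list[str]:
    """Return the names of the top_n ideas, ranked by weighted score."""
    ranked = sorted(ideas, key=lambda name: weighted_score(ideas[name], weights),
                    reverse=True)
    return ranked[:top_n]


# Placeholder weights, fixed before the campaign launches.
weights = {"scalability": 3, "market_fit": 3,
           "technological_maturity": 2, "customer_value": 2}

# Placeholder first-pass scores on a 1-5 scale.
ideas = {
    "idea_a": {"scalability": 5, "market_fit": 3,
               "technological_maturity": 4, "customer_value": 2},
    "idea_b": {"scalability": 3, "market_fit": 4,
               "technological_maturity": 4, "customer_value": 5},
    "idea_c": {"scalability": 2, "market_fit": 2,
               "technological_maturity": 3, "customer_value": 3},
}

print(shortlist(ideas, weights, top_n=2))
# prints ['idea_b', 'idea_a']
```

The shortlist is only the input to the human review panel. The ranking does not replace the panel's judgment on dimensions such as organizational fit.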

The Siemens example

Siemens experts evaluated both human and AI-generated ideas across eight criteria: expected order size, customer fit, portfolio fit, industry relevance, technological maturity, competitiveness, scalability, and willingness to pay.

Critically, evaluators were unaware of each idea's origin. The results were striking. AI-generated ideas scored higher than human ideas in 7 out of 8 criteria.

The largest gap appeared in competitiveness: AI ideas were perceived as more unique. The second largest gap was in expected order size: AI ideas were seen as representing larger commercial opportunities.

Human ideas outperformed AI only on industry relevance - the one criterion that most directly depends on domain expertise and contextual knowledge. The score differences were small and not statistically significant.

But the direction was consistent: AI-generated ideas evaluated well across almost every commercial and technical dimension. Human expertise remained the decisive advantage specifically where deep industry knowledge was required.

Step #4: Enhancement - combine AI clarity with human depth

The final stage before ideas enter the development pipeline is refinement. Raw ideas - whether generated by humans or AI - need sharpening. The problem statement needs to be precise. The customer value needs to be specific. The implementation path needs to be credible.

AI agents excel at structuring and clarifying ideas.

- They can rewrite vague concepts into clear, well-formed business cases.

- They can identify gaps in the logic of an idea and suggest how to address them.

- They can check ideas against regulatory requirements, existing patents, or competitive landscape data to flag risks before investment decisions are made.

Human teams bring the depth that makes refined ideas credible. They know which customers will actually pay for a solution, which internal capabilities make implementation feasible, and which organizational constraints will shape execution. The combination of AI's structural clarity and human contextual depth produces ideas that are both well-formed and grounded in reality.

How to apply this at your organization

- Use AI to produce a structured template for each shortlisted idea: problem statement, target customer, value proposition, technology requirements, risks, and next steps.

- Assign a human owner to each idea who has relevant domain expertise. The human owner's role is to pressure-test the AI-structured idea against real organizational knowledge and refine it until it is ready for a go/no-go decision.

This handoff from AI structure to human judgment is where AI agents and human teams create the most value together.
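
The template and the handoff can be represented as a simple record with a completeness check. The Python sketch below is one possible shape, assuming plain-text fields: `IdeaBrief`, `open_items`, and `ready_for_decision` are hypothetical names, and the example content is placeholder text rather than an idea from the Siemens campaign.

```python
from dataclasses import dataclass, fields


@dataclass
class IdeaBrief:
    """Structured template for one shortlisted idea (illustrative fields)."""

    title: str
    problem_statement: str = ""
    target_customer: str = ""
    value_proposition: str = ""
    technology_requirements: str = ""
    risks: str = ""
    next_steps: str = ""
    human_owner: str = ""  # domain expert who pressure-tests the brief

    def open_items(self) -> list[str]:
        """Names of fields that are still empty or whitespace only."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def ready_for_decision(self) -> bool:
        """A brief is ready for go/no-go only when nothing is left open."""
        return not self.open_items()


# Placeholder content for illustration only.
brief = IdeaBrief(
    title="Example idea",
    problem_statement="Placeholder problem statement",
    target_customer="Placeholder customer segment",
    value_proposition="Placeholder value proposition",
    technology_requirements="Placeholder technology list",
)

print(brief.open_items())          # prints ['risks', 'next_steps', 'human_owner']
print(brief.ready_for_decision())  # prints False
```

In this sketch the AI would fill the structural fields, while the brief stays blocked from a go/no-go decision until a human owner is assigned and the remaining sections are completed.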

The Siemens example

ITONICS refined and polished the final AI-generated ideas before submission, ensuring clarity, feasibility, and alignment with campaign goals.

To make AI ideas indistinguishable from human ideas during evaluation, the AI was instructed to vary style and format and to introduce minor imperfections. Despite this deliberate addition of noise, independent evaluators found AI-generated idea descriptions clearer and easier to understand than human-generated ones.

This clarity likely influenced expert evaluations by making the ideas easier to assess accurately - a finding with direct implications for how organizations should think about the role of AI in structuring ideas for human decision making.

AI agents in innovation: what changes for your organization

Applying this four-step framework does not require building a new innovation function from scratch. It requires three changes to how existing innovation activities are run.

#1: Integrate AI into the research phase before any campaign launches.

Stop relying solely on what your team already knows. Use AI to systematically scan the technology and market landscape every time you define a new innovation challenge. This takes hours, not weeks, and produces a knowledge base that makes every subsequent step more effective.

#2: Run AI ideation in parallel with human ideation. Do not choose between them.

The Siemens experiment shows that AI and human teams generate ideas in different solution spaces. You need both to build a pipeline that is genuinely diverse. Parallel ideation with blind evaluation removes the bias that typically disadvantages AI-generated ideas in organizational settings.

#3: Use AI to structure and evaluate at scale, but keep humans in the final decision loop.

AI makes evaluation faster and more consistent. Human experts make it contextually accurate. Neither alone produces the right outcome. The organizations that get this balance right will run more campaigns, evaluate more ideas, and identify more opportunities than those still relying on manual processes end-to-end.

The Industrial Metaverse market is expected to reach $100 billion by 2030, according to MIT Technology Review Insights. Siemens is not waiting to find out which use cases will matter. They are generating, evaluating, and selecting them now - with AI agents doing the heavy lifting across every stage of the process.

Your organization can apply the same framework. The tools exist. The process is proven. The question is whether you start now or catch up later.

How ITONICS supports AI agents in innovation

ITONICS provides the platform infrastructure that makes this four-step framework operational at scale. The Innovation AI runs the RISE process natively - scanning data sources, generating ideas, evaluating submissions, and refining outputs within a single environment (Exhibit 2).

Within the platform, a marketplace of agents collaborates to perform tasks, negotiate, and drive innovation, showcasing the power of agentic AI in orchestrating complex workflows.

Exhibit 2: Optimize the portfolio and shift your budget to initiatives that actually move the needle

Idea management platforms like ITONICS are essential tools for organizations to gather, evaluate, and implement ideas from various sources, helping to drive innovation and prevent stagnation. The power of ITONICS lies in enabling global collaboration and harnessing collective intelligence for organizational growth.

Innovation managers use ITONICS to run crowdsourcing campaigns that combine human and AI-generated ideas, evaluate submissions against custom criteria with AI-assisted scoring, track idea pipelines from submission to implementation, and connect technology scouting to active innovation campaigns so research findings feed directly into ideation.

Exhibit 3: Validate ideas with market data and customer insights

The Siemens experiment ran on the Siemens Innovation Ecosystem platform, built on ITONICS. It connected 130,000 colleagues, 10,000 startups, 20,000 suppliers, and 230 universities in one innovation community - with AI agents supporting every stage of the process.

FAQs on AI agents in innovation

Do you need a large organization to benefit from AI agents in innovation?

No. Smaller teams benefit more, not less. AI agents compensate directly for the capacity constraints that limit how many innovation activities a small team can run.

A team of five using AI agents can manage a pipeline that would typically require a team of fifteen.

How do you prevent evaluators from being biased for or against AI-generated ideas?

Run blind evaluations. Remove all indicators of idea origin before submitting ideas to human reviewers. Define evaluation criteria and weights before the campaign launches - not after seeing results.

The Siemens experiment did both: evaluators were unaware of which ideas were human or AI-generated, and criteria were defined in advance.

What internal data should be added to the AI research phase?

Customer feedback, product roadmaps, competitive intelligence, existing patent portfolio, internal research findings, and any market data specific to your industry that is not publicly available.

The more internal context the AI has, the more relevant the ideas it generates will be to your actual business.

How many ideas should be generated before moving to evaluation?

Enough to ensure diversity without creating evaluation overload. The Siemens campaign reviewed 22 ideas across 7 clusters from a pool of 100+ submissions. A practical target for most organizations is 15 to 30 shortlisted ideas from a campaign pool of 80 to 150 submissions.

AI pre-screening makes this manageable even for small evaluation teams.

How do you handle intellectual property when AI generates ideas?

Define IP ownership policies before running any AI ideation campaign. Most organizations treat AI-generated ideas as company property when the AI was briefed with proprietary internal data and runs on the company infrastructure.

Consult your legal team to establish clear guidelines before the first campaign launches - not after.

What evaluation criteria work best for AI and human idea comparison?

Criteria that can be defined objectively before evaluation begins work best: scalability, technological maturity, estimated market size, customer fit, and competitive differentiation.

Avoid criteria that are inherently subjective or require the evaluator's knowledge of the idea's origin. Industry relevance was the one criterion on which human ideas scored higher than AI ideas in the Siemens experiment - keep this as a human-weighted criterion in your scoring model.