The F-35 took roughly two decades and an estimated $400 billion to develop and procure. Anduril can field a combat-capable autonomous system in under 12 months. Helsing has updated fielded battlefield AI on cycles as short as a week. These are not edge cases. They are the new baseline.

Defense innovation is shifting faster than most incumbents can track. Technology cycles are now led by the commercial sector. Geopolitical threats are no longer predictable. Battlefields are distributed, software-defined, and multi-domain. The traditional defense innovation model was not built for any of this.

The result: a widening gap between organizations still running waterfall procurement and new players who have redefined what speed and operational relevance look like. Successful innovation in defense today looks nothing like it did a decade ago.

This article breaks down why the old closed innovation model fails, what the new defense innovation model looks like, and the highest-leverage of 19 concrete lessons for operationalizing it. The lessons are drawn from discussions with over 300 industry experts and from real-world practice at Anduril, Palantir, Baykar, Helsing, and others.

From closed innovation to open innovation: why the shift is now a security imperative

For decades, defense organizations ran on what innovation management scholars call closed innovation.

- Companies generate, develop, and commercialize their own ideas internally.

- Research stays within the organization.

- External knowledge is treated as a risk, not a resource. Intellectual property is hoarded rather than leveraged.

In Open Innovation (Harvard Business School Press, 2003), Henry Chesbrough identified this model's fundamental flaw: it assumes an organization can control all the best ideas in its field. In fast-moving technology environments, that assumption breaks down. The most valuable new ideas increasingly emerge outside the organization's walls.

In defense, closed innovation created five structural failures that now threaten competitive advantage.

#1: The innovation process starts too late

Capability development historically began only after formal military requirements were issued - a process lasting five to ten years.

In Ukraine, commercial drones with modular payloads and continuous software updates have outpaced state-developed systems. Requirements issued today describe yesterday's battlefield.

#2: Hardware-centric thinking blocks progress

Legacy defense systems centered on custom hardware, with software added as an afterthought. Upgrades required full overhauls.

Proprietary architectures blocked third-party integration. Meanwhile, uncrewed systems like Baykar's TB2 gain competitive advantage not from airframes but from real-time software adaptability.

#3: The innovation ecosystem stays closed

Defense innovation long remained within primes and certified subcontractors, excluding startups and academia. This cut organizations off from external ideas and slowed their adoption of frontier technology. Many of today's AI advances emerge from commercial labs, not defense contractors. Large companies that ignore this reality fall behind firms that do not.

#4: Development happens in silos

Capability development occurred in functional isolation across departments. Today's defense requires interoperability, seamless data sharing, and coalition operations.

Siloed development produces systems that cannot communicate, coordinate, or integrate - a direct failure of innovation management at the organizational level.

#5: Investment prioritization misaligns with mission urgency

R&D and innovation budgets followed long-term budget cycles driven by contract size, not frontline need. Yet when threats evolve in months, speed of relevance matters more than sunk cost.

DIU and AFWERX have started to fix this through milestone-based investment - but most procurement organizations have not made the shift.

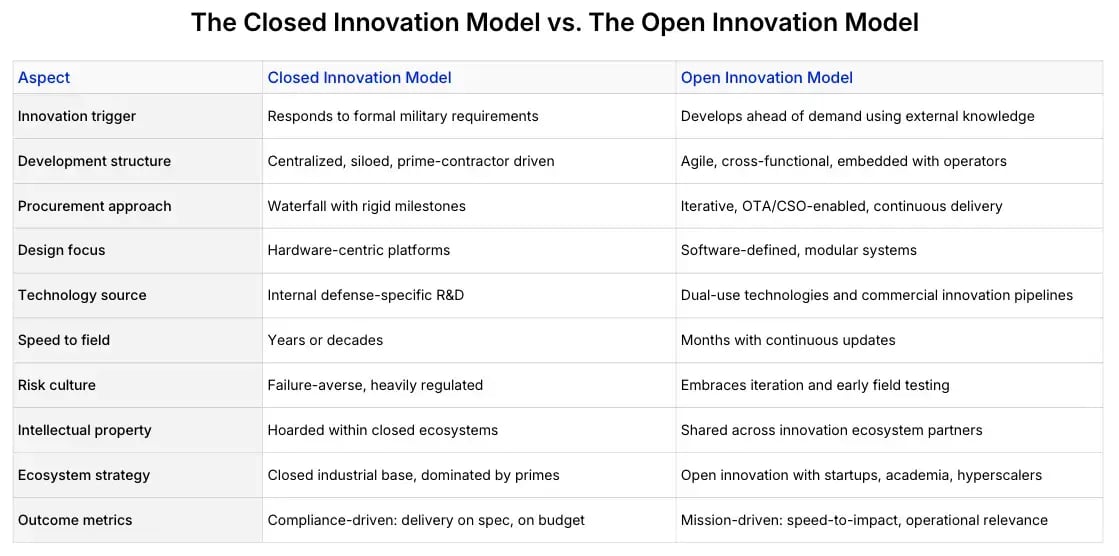

Exhibit 1 captures the full contrast between the closed innovation model that defined defense for decades and the open, agile model that successful innovation now demands.

The old model is not simply inefficient. It is strategically misaligned. In defense, closed innovation is no longer a conservative choice. It is a liability.

What successful innovation looks like in the new defense innovation model

A new breed of defense company has emerged: one that plays by different rules. These firms are not waiting for requirements to be issued. They develop ahead of demand, build modular systems designed for integration, and operate with a software-first mentality. Their innovation process is continuous, not sequential.

Companies like Anduril, Helsing, Palantir, Shield AI, and Baykar are redefining what successful innovation means in a defense context. They demonstrate that paths to capability outside traditional procurement - commercial development, dual-use technology, and embedded field engineering - repeatedly outperform paths that remain locked inside traditional procurement cycles.

Anduril's Lattice OS - a real-time battlefield software platform - was developed well before any DoD solicitation. It began as a commercial effort to fuse sensor data into a single operational picture and is now used across multiple U.S. services. Anduril is also building one of the largest autonomous drone production facilities in the United States, intended to produce loitering munitions and AI-enabled surveillance systems at startup speed and operational scale.

Helsing's AI platform processes battlefield sensor data in real time for targeting, friend-or-foe detection, and fire control. Its tools have been updated in the field to adjust for changing threat conditions - sometimes on a weekly cycle. This is technological innovation deployed as a living system, not a delivered product.

The common thread across these companies: open innovation ecosystems, external knowledge actively absorbed, and development cycles measured in months. These are the new norms. Organizations that do not adopt them will find themselves competing in a new market they no longer understand.

19 lessons to operationalize defense innovation

Operationalizing the new defense innovation model requires a fundamental shift in innovation management across four domains: Culture & Mindset, Product & Technology, Collaboration & Ecosystem, and Strategy, Governance & Processes.

Each domain addresses a specific set of challenges. Together, they form the implementation roadmap for organizations ready to move from closed innovation to open, mission-aligned defense innovation. Below are the highest-leverage lessons from each domain.

Culture & mindset: building an environment for successful innovation

The shift here is from centralized, rule-bound bureaucracy to decentralized teams with experimentation mandates, mission autonomy, and a tolerance for the creative risk that innovation requires. Without that tolerance, teams default to compliance over problem-solving, and the organization loses its ability to adapt as conditions change.

Culture is not a soft problem. It is the foundation that determines whether all other implementation efforts succeed or fail.

Lesson 1: Build ahead of demand

Stop waiting for formal requirements to begin capability development. Identify emerging threats and prototype solutions based on anticipated mission scenarios. Anduril built Lattice OS before the DoD asked for it. Baykar exported a combat-proven drone before most acquisition programs recognized the category.

Strategy and R&D leaders who act on anticipated needs compress time to operational relevance from years to months. This shift - from reactive to anticipatory - is the single most important culture change in the new innovation process. It has to be led from the top: inside established companies, teams act ahead of demand only when leadership explicitly empowers them to.

Lesson 2: Embed engineers with users

Place developers alongside operators during design and testing. This is how Himera developed its tactical radio units: in direct collaboration with Ukrainian frontline operators, iterating on them while they were deployed in high-intensity conflict. Darkhive co-develops small autonomous ISR systems with special operations teams and iterates on real mission feedback.

Embedding generates knowledge that requirements documents never capture. It creates a direct feedback loop between problem and solution, between the employees who build and the people who rely on what they build. That sustained contact is also what keeps a steady flow of new ideas coming from the field.

Lesson 3: Tolerate iteration and failure

Create an environment where small failures are accepted as part of learning cycles, not punished. Organizations that penalize failed experiments select against the behavior that produces breakthroughs.

Test-learn-adapt loops outperform plan-then-execute cycles in any domain where conditions change faster than programs.

Lesson 4: Stand up internal accelerators

Establish protected units that can explore and prototype outside traditional acquisition constraints. Large companies and defense ministries need internal structures that operate at startup speed - separated from legacy governance, staffed by people with authority to make decisions and run experiments.

The remaining Culture & Mindset lesson covers training acquisition personnel to work with nontraditional vendors. All five lessons are detailed in the full report.

Product & technology: from waterfall R&D to software-defined systems

The shift here is from platform-dominant, hardware-first development to agile, continuously updated systems built with fieldable minimum viable products. Technological innovation in defense must now move at the pace of software, not hardware programs.

Lesson 6: Build modular, agile architectures

Shift from bespoke, closed designs to open, swappable hardware and software modules that enable integration without full system overhauls. Boeing and Saab's Ground-Launched Small Diameter Bomb (GLSDB) was fielded in under 15 months by pairing an existing guided bomb, the GBU-39 Small Diameter Bomb, with an existing rocket motor to create a new long-range capability.

Modular design compressed what was once a multi-year development cycle into one that matched operational urgency.

Lesson 7: Make everything software-defined

Move from hardware-fixed performance to software-upgradable systems where features and improvements deploy iteratively. Shield AI's Hivemind software enables autonomous flight in GPS-denied environments - capability delivered by updating software, not redesigning airframes. Features that previously required hardware replacement now deploy in the field.

Lesson 10: Push live updates based on battlefield feedback

Software updates informed by in-theater telemetry allow systems to evolve after deployment. Helsing updates its AI tools based on live operational data - sometimes weekly. This turns deployed systems into continuously improving assets rather than static deliverables frozen at the point of fielding.

The full Product & Technology domain covers six lessons, including AI-native design, digital twins, operational simulation, and flexible production capacities. All six are in the report.

Collaboration & innovation ecosystem: from closed primes to open innovation networks

The shift here is from gated, prime-dominated ecosystems to blended public-private networks where external ideas flow freely, dual-use technology gets absorbed systematically, and the innovation ecosystem includes startups, academia, and commercial technology firms alongside traditional primes.

This is the domain where closed innovation has done the most damage.

- External knowledge existed.

- Dual-use technology was available.

- Other firms had developed solutions.

But the closed model kept all of it out. The new model inverts this logic entirely.

Lesson 13: Forge hybrid partnerships

Pair traditional scale advantages with agile contributors. Rheinmetall's collaboration with Anduril combines manned-unmanned vehicle platforms with autonomy software, aiming to deliver teaming solutions faster than either organization could achieve alone.

These partnerships represent a structural break from legacy vendor lock-in. Rather than attempting to control every component, large companies integrate external innovation to accelerate timelines and improve adaptability.

Lesson 14: Enable open innovation channels

Use challenge formats, SBIRs, and pitch events to source new ideas from unexpected places and accelerate validation. Italy's GCAP Acceleration Initiative issued over 40 targeted technology exploration calls across AI, autonomous systems, advanced materials, and digital engineering.

These calls were structured to map directly onto capability development goals. The result: a repeatable process for moving external ideas from concept to integration.

The Collaboration & Ecosystem domain also covers streamlined security access and federated data architectures. Both lessons are in the full report.

Strategy, governance & processes: from compliance to mission outcomes

The shift here is from slow, compliance-driven acquisition and delivery cycles to continuous feedback, dynamic investment prioritization, and contracts measured by operational performance in the field. This domain is where innovation management meets organizational governance - and where the gap between intent and implementation is widest at most defense organizations.

Lesson 17: Quantify MVP goals

Define short-term impact targets before starting any development effort. Fielding 5 to 10 deployable systems in 24 to 36 months is a concrete goal. It creates urgency, enables resource allocation decisions, and generates real user feedback far sooner than open-ended, decade-long development programs.

Vague goals protect no one. They delay accountability and allow innovation initiatives to drift without producing new products or demonstrable progress.

Lesson 18: Prioritize investment through mission fit and user traction

Move from contract-chasing investment models toward dynamic prioritization of internal R&D spending based on frontline needs, emerging threat vectors, and prototype traction.

DIU and AFWERX both implement milestone-based investment to operationalize this shift. The question changes from "what has a contract?" to "what is proving relevance at the field level?" Several factors drive this prioritization: threat urgency, degree of existing prototype traction, integration complexity, and alignment with strategic capability goals.
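The four factors above can be turned into a simple, repeatable ranking. The sketch below is a minimal illustration, assuming each candidate is scored 1 to 5 per factor and that the weights in `WEIGHTS` (hypothetical values; the article does not specify any) sum to 1. The names `Candidate`, `priority_score`, and `rank` are likewise illustrative, not part of DIU or AFWERX practice.

```python
from dataclasses import dataclass

# Hypothetical weights: the article names the four factors but not how to
# weight them, so these values are illustrative assumptions only.
WEIGHTS = {
    "threat_urgency": 0.35,
    "prototype_traction": 0.30,
    "integration_ease": 0.15,
    "strategic_alignment": 0.20,
}


@dataclass
class Candidate:
    """One internal R&D effort competing for funding (all scores 1-5)."""
    name: str
    threat_urgency: int          # 1 = distant threat, 5 = active threat
    prototype_traction: int      # 1 = paper concept, 5 = strong field adoption
    integration_complexity: int  # 1 = drop-in, 5 = major re-architecture
    strategic_alignment: int     # 1 = weak fit, 5 = core capability goal


def priority_score(c: Candidate) -> float:
    """Weighted score on a 1-5 scale; higher means fund sooner."""
    # Invert complexity so that easier integration raises the score.
    integration_ease = 6 - c.integration_complexity
    return (
        WEIGHTS["threat_urgency"] * c.threat_urgency
        + WEIGHTS["prototype_traction"] * c.prototype_traction
        + WEIGHTS["integration_ease"] * integration_ease
        + WEIGHTS["strategic_alignment"] * c.strategic_alignment
    )


def rank(candidates: list[Candidate]) -> list[tuple[str, float]]:
    """Return (name, score) pairs sorted from highest to lowest priority."""
    scored = [(c.name, round(priority_score(c), 2)) for c in candidates]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    portfolio = [
        Candidate("comms relay", 3, 2, 4, 5),
        Candidate("counter-drone sensor", 5, 4, 2, 4),
    ]
    for name, score in rank(portfolio):
        print(f"{name}: {score}")
```

Rerunning the ranking each quarter with updated traction scores is what makes the prioritization dynamic rather than fixed at contract award.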

Lesson 19: Adopt outcome-based contracting

Move away from process compliance toward contracts measured by milestones, deliverables, and performance in the field. Other Transaction Authority (OTA) and Commercial Solutions Opening (CSO) mechanisms are designed for this kind of flexible, prototype-focused acquisition and represent an important course correction for defense acquisition.

Rebellion Defense has received contracts through DIU and other rapid acquisition channels, with delivery cycles aligned to user need rather than budget-year planning. When contracts measure outcomes rather than compliance, the entire innovation process reorients around impact.

How ITONICS helps defense organizations implement the new model

Knowing the 19 lessons is the first step. Making them operational across a large defense organization - with all the governance, security, and coordination challenges that entails - is the harder problem. Most organizations have the ideas. What they lack is the infrastructure to implement them at scale.

ITONICS provides the digital backbone for the full defense innovation process - from technology scouting and external idea intake to portfolio governance and project execution. The platform lets defense organizations manage and track innovation projects from initial idea generation through to fielding or commercialization, so promising ideas stay visible until they produce tangible outcomes.

GDELS-Mowag uses it to run a stage-gated innovation funnel from idea collection to proof of concept, with every step tracked in one shared environment.

TNO and the Dutch Ministry of Defense use it to run their Innovation Radar, drawing inputs from over 40 experts and giving more than 600 users across the defense ecosystem shared visibility into emerging technology priorities.

Four benefits make the difference in practice:

- Shared foresight. Teams monitor technologies and threats collaboratively, giving leaders evidence to invest before formal requirements are issued.

- Repeatable scouting. Evaluating dual-use startups and external ideas becomes a structured, scalable process - not a one-off exercise.

- Transparent governance. Live dashboards let innovation managers track impact, ownership, and investment priorities in one place.

- Coordinated roadmaps. Engineers, operators, and acquisition leads work from the same data. Silos become shared plans.

The result: defense organizations move from scattered innovation efforts to structured, mission-aligned execution at speed.

The window to act is narrowing

The lessons in this article are drawn from real-world shifts already underway - at startups, alliances, and reforming incumbents. Open innovation is the new imperative for defense organizations, requiring them to integrate external ideas and collaborative approaches alongside internal efforts. The organizations moving fastest are not waiting for policy mandates or budget cycles. They are building the new model now.

For defense innovation leaders at primes, ministries, and acquisition organizations, the question is no longer whether to transform. It is whether to lead or to respond. The examples in this article, from Anduril and Helsing to Baykar and Shield AI, show what leading looks like.

Download the full report to access all 19 lessons, five implementation case studies, and the Innovation Needs Matrix that maps each lesson by urgency, effort, and potential impact.

FAQs on the new defense innovation model

Where should a large defense prime start with this transformation?

Start with the two lessons that require the least time to implement and generate the most immediate signal: embed engineers with users (Lesson 2) and enable open innovation channels (Lesson 14). Both can begin within 90 days without restructuring procurement.

Run a 60-day pilot with one operational unit and one external tech call. Use the results to build the internal business case for deeper transformation across the other domains.

How do you apply outcome-based contracting when most internal processes still require milestone compliance?

Use OTA and CSO mechanisms as the vehicle for new programs specifically. You do not need to restructure existing contracts - you need a parallel acquisition path for innovation-focused programs.

Define 3 to 5 measurable field performance criteria before contract award. Tie payment tranches to demonstrated outcomes in the field, not document deliverables on a desk.
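A minimal sketch of how payment tranches could be tied to pre-agreed field criteria, assuming each criterion carries a threshold and a share of contract value, with shares summing to 1. `Criterion`, `releasable_payment`, and the example metrics are hypothetical illustrations, not terms from any OTA or CSO template.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    """A measurable field performance criterion agreed before contract award."""
    name: str
    threshold: float             # minimum (or maximum) acceptable measured value
    tranche_share: float         # fraction of contract value released when met
    higher_is_better: bool = True


def releasable_payment(
    contract_value: float,
    criteria: list[Criterion],
    field_results: dict[str, float],
) -> tuple[float, list[str]]:
    """Return the amount releasable and the criteria demonstrated in the field.

    A criterion with no field measurement yet counts as not demonstrated,
    so only measured field results can release a tranche.
    """
    if abs(sum(c.tranche_share for c in criteria) - 1.0) > 1e-9:
        raise ValueError("tranche shares must sum to 1.0")
    released, met = 0.0, []
    for c in criteria:
        measured = field_results.get(c.name)
        if measured is None:
            continue
        if c.higher_is_better:
            passed = measured >= c.threshold
        else:
            passed = measured <= c.threshold
        if passed:
            released += contract_value * c.tranche_share
            met.append(c.name)
    return released, met
```

For example, with a hypothetical 10 million contract split 40/30/30 across detection range (at least 5 km), mean time between failures (at least 200 h), and setup time (at most 15 min), field results of 6.2 km, 150 h, and 12 min would release 7 million and hold back the reliability tranche until it is demonstrated.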

How do we build ahead of demand without formal requirements to anchor development?

Use structured foresight instead of requirements as your trigger. Map emerging threats and technology signals to anticipated mission gaps using a shared radar or assessment framework. Set a 24-month horizon. Identify 3 to 5 capability gaps that do not yet have formal requirements but have a high probability of becoming critical.

Allocate a protected R&D budget - even 5% of annual program spend - to prototype against those gaps before competitors or adversaries force the issue.
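The foresight steps above (a 24-month horizon, 3 to 5 gaps without formal requirements, a protected budget of about 5% of program spend) can be sketched as a filter plus a budget split. This is a minimal illustration under stated assumptions: the probability estimates come from analysts, `min_probability=0.6` is a hypothetical cut-off, and the equal split of the pool is an assumption rather than a recommendation from the article. `GapSignal`, `select_gaps`, and `protected_budget` are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class GapSignal:
    """A capability gap surfaced by foresight work (radar, threat assessment)."""
    name: str
    months_to_critical: int        # analyst estimate of when the gap becomes critical
    probability_critical: float    # analyst estimate, 0.0 to 1.0
    has_formal_requirement: bool


def select_gaps(
    signals: list[GapSignal],
    horizon_months: int = 24,
    max_gaps: int = 5,
    min_probability: float = 0.6,  # hypothetical cut-off
) -> list[GapSignal]:
    """Keep gaps with no formal requirement that are likely to turn critical
    within the horizon, highest probability first, capped at max_gaps."""
    candidates = [
        s for s in signals
        if not s.has_formal_requirement
        and s.months_to_critical <= horizon_months
        and s.probability_critical >= min_probability
    ]
    candidates.sort(key=lambda s: s.probability_critical, reverse=True)
    return candidates[:max_gaps]


def protected_budget(
    annual_program_spend: float, gaps: list[GapSignal], share: float = 0.05
) -> dict[str, float]:
    """Split a protected R&D pool (default 5% of spend) equally across gaps."""
    if not gaps:
        return {}
    pool = annual_program_spend * share
    return {g.name: pool / len(gaps) for g in gaps}
```

If fewer than three gaps pass the filter, that is a signal to revisit the foresight inputs rather than lower the threshold to fill the quota.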

What does it take to set up an internal accelerator inside a large defense organization?

Three conditions are non-negotiable:

- a dedicated budget not subject to normal reallocation,

- decision-making authority that does not route through standard program offices, and

- a mandate to operate outside existing acquisition timelines.

A team of 8 to 12 people with a 12- to 18-month charter to field one minimum viable capability is a reasonable starting point.

AFWERX and Lockheed Martin's Skunk Works provide reference points for structure and governance.

How do you evaluate dual-use startups for defense relevance?

Use a structured scoring framework across six dimensions: technology readiness level, mission customer readiness, commercial funding readiness, integration complexity, security clearance pathway, and time to prototype.

Score each startup 1 to 9 per dimension. MIT's Dual-Use Readiness Levels framework - developed in collaboration with defense and commercial innovation researchers - provides a structured methodology for this kind of assessment.

Aim to complete initial scoring in under two hours per startup with a team of three evaluators.
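A minimal sketch of how three evaluators' 1 to 9 scores might be combined, assuming every dimension is oriented so that 9 is most favorable (for example, low integration complexity scores high). Averaging per dimension and flagging spreads of 3 or more points are hypothetical choices, not part of the MIT framework; `DIMENSIONS` and `aggregate_scores` are illustrative names.

```python
from statistics import mean

# The six dimensions named above. Assumption: each is scored so that 9 is
# the most favorable outcome (e.g. simple integration scores high).
DIMENSIONS = (
    "technology_readiness",
    "mission_customer_readiness",
    "commercial_funding_readiness",
    "integration_complexity",
    "security_clearance_pathway",
    "time_to_prototype",
)


def aggregate_scores(evaluations, disagreement_threshold=3):
    """Combine several evaluators' 1-9 scores for one startup.

    evaluations: list of dicts, one per evaluator, mapping dimension -> score.
    Returns per-dimension means, the overall mean, and the dimensions where
    evaluators disagree by at least `disagreement_threshold` points.
    """
    if not evaluations:
        raise ValueError("need at least one evaluation")
    per_dimension, flagged = {}, []
    for dim in DIMENSIONS:
        scores = [e[dim] for e in evaluations]
        if any(not 1 <= s <= 9 for s in scores):
            raise ValueError(f"{dim}: scores must be between 1 and 9")
        per_dimension[dim] = mean(scores)
        # A wide spread usually means evaluators need to discuss, not average.
        if max(scores) - min(scores) >= disagreement_threshold:
            flagged.append(dim)
    overall = mean(list(per_dimension.values()))
    return per_dimension, overall, flagged
```

Flagged dimensions give the three evaluators a short agenda for reconciling their views, which helps keep the review inside the two-hour target.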

Does the new defense innovation model apply to hardware-intensive programs, or only software and autonomy?

It applies to both, but the implementation differs.

For hardware-intensive programs, the highest-leverage shifts are modular architecture design (Lesson 6), digital twin validation before physical build (Lesson 11), and flexible production systems (Lesson 8).

Boeing and Saab fielded the Ground-Launched Small Diameter Bomb, a new long-range munition capability, in under 15 months by integrating existing hardware into a new system. That is a hardware program delivered at startup speed - through modular design principles, not a fundamental change in what defense companies build.