Future-prepared organizations achieve 33% higher profitability and 200% higher market capitalization growth than their peers, according to research from Rohrbeck and Kum. That is not just a statistic. It is the distance between setting the terms of competition and scrambling to respond.

Most companies are not future-prepared. They have horizon scans, trend reports, innovation trackers, and environmental scanning tools. What they lack is a program: the governance, defined roles, and decision-integration architecture that converts intelligence into action.

Ask yourself how many competitive intelligence findings from the last 12 months directly changed a resource allocation decision. For most organizations, the honest answer is few or none. Not because the intelligence was poor, but because the program was not built to connect it to decisions.

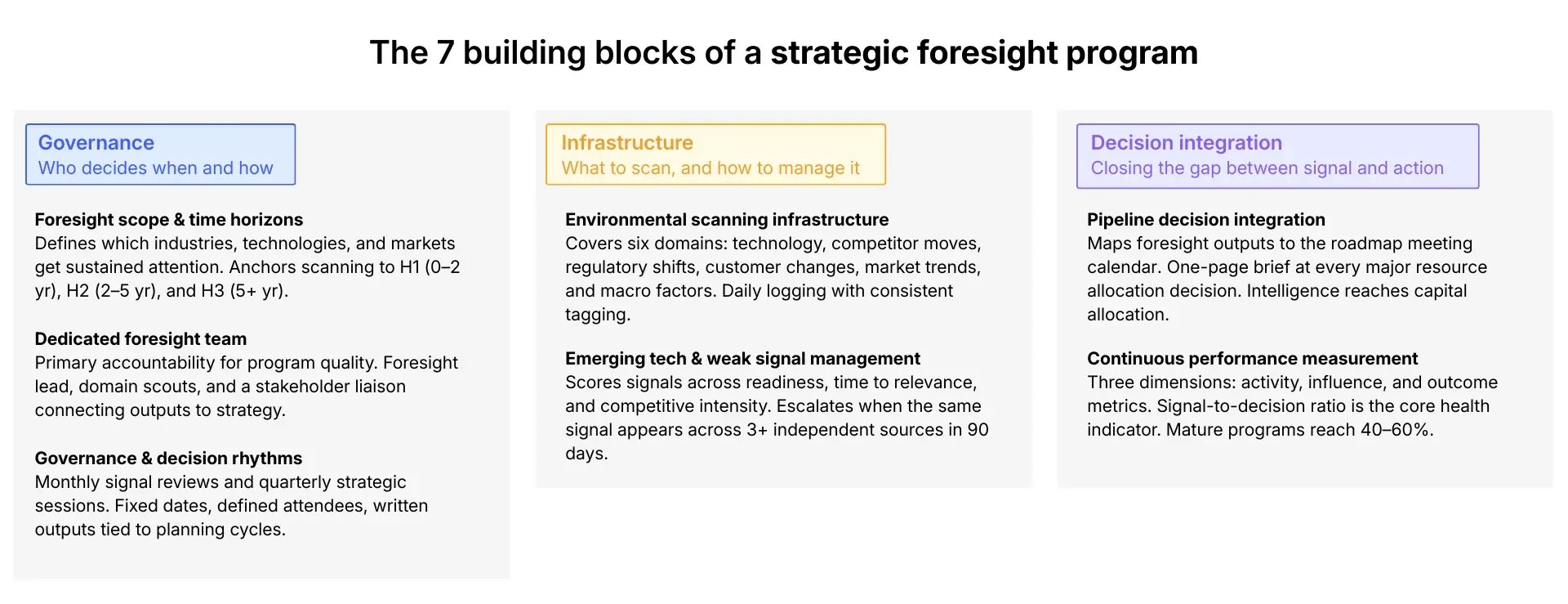

This article provides the blueprint for such a program. You will find the seven building blocks of a modern strategic foresight program, a staged approach to advancing foresight maturity, and a model for connecting competitive intelligence to portfolio decisions across markets and business units.

Exhibit 1: The 7 building blocks of a strategic foresight program

The goal is a program where emerging technologies, competitor moves, and shifting industry dynamics inform innovation strategy before they become disruptive.

But first: why is this so hard to get right?

The new scouting paradox: more signals, less foresight

The volume of market intelligence available to organizations has never been greater. AI-powered monitoring, real-time data feeds, and always-on environmental scanning platforms that track emerging technologies, regulatory shifts, and competitor activity have made intelligence gathering faster and broader than ever.

Yet strategic decisions are becoming harder to make well. The challenges are not technical. They are structural.

Why more signals produce fewer decisions

AI tools now monitor thousands of sources simultaneously: patent databases, academic publications, startup funding, regulatory filings, trade shows, and social platforms. Organizations that once relied on quarterly market research now receive continuous streams of information about competitors, industry shifts, regulatory changes, and technology developments.

Exhibit 2: ITONICS alert showing an increase in interest in the trend "rise of autonomous networks"

Greater information volume does not produce better strategic decisions. When signals exceed a team's capacity to evaluate them, the instinct is to collect more data rather than decide faster. Decision-making suffers not from a shortage of input but from an absence of structure.

Popular approaches like ad hoc scanning and periodic focus groups were designed for a slower competitive rhythm. Research from our 2026 Top Trends in Corporate Innovation report shows that establishing three to five strategic objectives before environmental scanning begins eliminates 60 to 70% of irrelevant signals immediately. Most organizations skip this step. Signals accumulate. Decisions do not.

The 2025 AlixPartners Disruption Index found that 57% of senior executives report their company was highly disrupted in the past year. EY research found the share of executives surprised by political risk events jumped from 1% in 2021 to 35% by 2025. The risks of operating without a structured foresight process compound as industry conditions shift and customers change priorities. More signals did not make them more prepared. A lack of program structure is what left them exposed.

How AI reshapes the foresight equation

The foresight equation is simple: signal detection plus analytical capacity plus decision speed equals competitive advantage.

Get all three right, and you act on intelligence before competitors do. Get any one wrong, and the intelligence you collect never becomes action.

AI reshapes all three variables simultaneously. It expands detection across thousands of sources, compresses analysis by surfacing cross-source patterns that would take weeks to identify manually, and accelerates decision-making. Real-time analytics deliver a 29% improvement in decision speed, according to 2025 research by Hydrogen BI.

But here is the problem most organizations run into. AI strengthens all three variables at the signal level. It does nothing to fix the structural failures that stop intelligence from reaching decisions. A BCG survey of 500 organizations, published in Harvard Business Review in January 2026, found that companies with more advanced foresight capabilities report a sustained performance advantage.

The differentiator is not the tools. It is the program.

Exhibit 3: The traditional foresight equation

Each variable in the equation maps to a structural failure.

- Detection breaks down when there is no governance to define what to scan for.

- Analytical capacity breaks down when ownership is diffuse, and no one is accountable for turning signals into insight.

- Decision speed breaks down when intelligence sits adjacent to the business rather than inside it.

These are not technology problems. They are program design problems, and they show up in three predictable patterns.

Three structural gaps that keep foresight from reaching decisions

Most foresight programs fail not because of poor methods or insufficient data, but because of three structural gaps. Each one corresponds to a variable in the foresight equation. Each requires a specific fix.

Weak governance. Without defined decision rights, escalation pathways, or review rhythms, intelligence is produced and distributed but never formally integrated into strategic planning cycles.

The result is a program that informs conversations without changing decisions: a research function mistaken for a strategy function. The fix is formal governance with defined cadences, fixed attendees, written outputs, and documented connections between competitive intelligence findings and resource allocation moments.

Diffuse ownership. Foresight is assigned as a secondary responsibility to analysts or innovation managers already at capacity. Organizational culture reinforces this pattern when innovation is treated as peripheral rather than central to business strategy. It is consistently the first activity cut when quarterly pressures mount. The fix is dedicated headcount with primary accountability for program quality and continuity.

Poor integration. Market intelligence activities sit adjacent to the business rather than inside it. Capital allocation decisions are made in parallel, and the two processes rarely intersect. The risks are significant: Rohrbeck and Kum found that organizations with deficient future preparedness face a performance discount of 37 to 108% relative to industry peers.

The fix is structural: foresight outputs mapped to the portfolio review calendar, with a formal intelligence briefing at every major resource allocation decision point, ensuring competitor moves, market shifts, and changing customer priorities affect pipeline decisions when it matters.

These three gaps share a common root: a program assembled rather than deliberately designed. The seven building blocks that follow define what deliberate design requires, and what each gap is specifically built to fix.

The seven building blocks of a strategic foresight program

The building blocks answer one question: what does a complete foresight program require as permanent capabilities? They are not a sequence but an architecture. All must be present for the program to function as a continuous intelligence and innovation management system rather than a collection of disconnected activities.

Building block 1: Foresight scope and time horizons

Scope is the program's first and most consequential design decision. It defines which industries, technologies, competitors, markets, and regulatory domains receive sustained analytical attention.

Exhibit 4: Structure of a trend radar including radar segments, size, color, and distance

Without a clear definition, environmental scanning drifts toward whatever is topical rather than strategically relevant, one of the core issues affecting strategic planning quality across organizations of every size.

Scope is structured across three time horizons.

- H1 covers zero to two years, focused on near-term competitor moves, market share dynamics, and shifts affecting current strategies and customer priorities.

- H2 covers two to five years, focused on emerging technologies and structural industry changes that require environmental scanning, scenario development, and strategy adjustment.

- H3 covers five or more years, focused on disruptive technologies and long-range possibilities that shape future market positioning and innovation opportunities.

The right balance across horizons differs by company, industry velocity, and the specific risk factors most relevant to the business, and should be reviewed annually. A trend radar helps to implement the scope definition.
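The horizon boundaries above can be sketched as a small classifier. This is only an illustration: the function name `classify_horizon` is hypothetical, and the choice to assign the boundary values (exactly two or five years) to the later horizon is an assumption, since the article's ranges touch at those points.

```python
from enum import Enum


class Horizon(Enum):
    H1 = "H1"  # zero to two years
    H2 = "H2"  # two to five years
    H3 = "H3"  # five or more years


def classify_horizon(years_to_relevance: float) -> Horizon:
    """Map an estimated time to relevance (in years) onto H1, H2, or H3.

    Assumption: boundary values go to the later horizon (2 -> H2, 5 -> H3).
    """
    if years_to_relevance < 0:
        raise ValueError("years_to_relevance must be non-negative")
    if years_to_relevance < 2:
        return Horizon.H1
    if years_to_relevance < 5:
        return Horizon.H2
    return Horizon.H3
```

A team that weights horizons differently would adjust the cut-offs during the annual scope review rather than change the structure.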

According to our 2026 Top Trends in Corporate Innovation research, organizations connecting real-time competitive intelligence with portfolio decisions are up to 40% more confident in investment decisions. Scope definition is the prerequisite that makes that connection possible.

Scope defines what to watch. The next building block defines who does the watching.

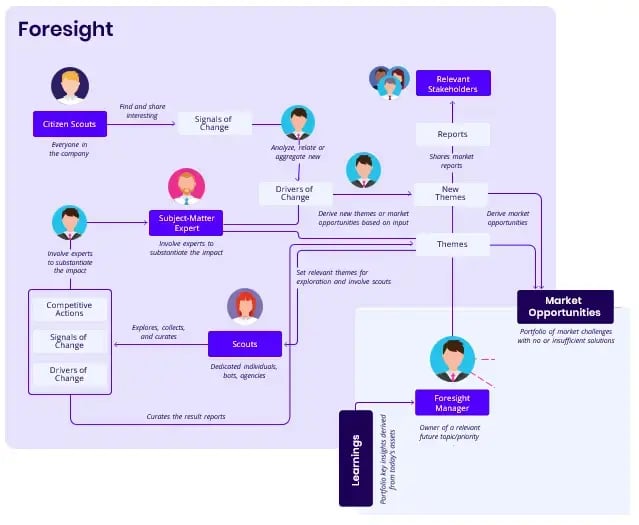

Building block 2: A dedicated team of competitive intelligence professionals

Foresight requires people whose primary accountability is program quality and continuity. Assigned as a secondary responsibility, it is deprioritized under operational pressure. A minimum viable team includes a foresight lead, two to three competitive intelligence professionals covering assigned domains, and a stakeholder liaison connecting outputs to business strategy.

Exhibit 5: The foresight universe and its team members

The skills required (working with ambiguous data, translating intelligence into decision-relevant insights, and constructing implications about future competitor moves) must be developed deliberately. These are not skills most organizations recruit for directly. The ability to synthesize weak signals into actionable insight is one of the most valuable and underdeveloped aspects of foresight practice.

Different companies structure this capability differently depending on existing innovation management functions, but the core roles remain consistent.

- In larger organizations, domain scouts embedded within business units extend coverage without scaling central headcount.

- Some organizations supplement internal teams with external services and advisory networks, particularly when creating coverage in new markets or industries.

Even the best team produces intelligence that stalls without formal foresight governance to route it to decisions.

Building block 3: Governance and decision rhythms

A foresight program without governance is a research function. Intelligence circulates and conversations improve, but strategic decisions remain unaffected. Two rhythms define a functional governance model.

- A monthly foresight review covers escalated signals, portfolio implications, and scanning priority adjustments.

- A quarterly strategic session connects program outputs to planning cycles, reviewing scenario updates and producing documented recommendations for resource reallocation.

Both require fixed dates, defined attendees, and written outputs.

Without these rhythms, competitive intelligence findings circulate informally and lose their connection to specific decisions. Innovation management teams that establish formal governance early consistently report higher signal-to-decision ratios within six months.

One of the clearest benefits of structured governance is that it focuses organizational effort on the insights that matter rather than letting intelligence accumulate in reports no one acts on.

Governance sets the rhythm. Environmental scanning provides the raw material it needs to function.

Building block 4: Environmental scanning infrastructure

Scanning infrastructure is the systematic process for collecting competitive intelligence across the full external environment. Most organizations have some form of environmental scanning in place. The problem is coverage and connection: scanning that lacks defined domains produces an undifferentiated stream of signals, and scanning that lacks governance connections produces intelligence that never reaches a decision.

A complete infrastructure covers six domains:

- technology developments and emerging technologies;

- new technology adoption by competitors;

- competitor intelligence across target and adjacent markets;

- regulatory shifts;

- customer and market changes;

- and macroeconomic and geopolitical factors.

A written policy on ethical concerns around data sourcing should be established before scanning begins.

Team members collect data daily, logging signals and creating consistent records with tagging across domain, time horizon, confidence level, and strategic impact. All the information gathered feeds into a shared repository, making intelligence visible across teams.
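One way to keep those records consistent is to give every logged signal the same typed shape. The sketch below is an assumption, not a description of any particular platform: the `Signal` and `Domain` names, the field names, and the 1-to-5 scales for confidence and strategic impact are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Domain(Enum):
    """The six scanning domains listed above."""
    TECHNOLOGY = "technology developments and emerging technologies"
    COMPETITOR_TECH_ADOPTION = "new technology adoption by competitors"
    COMPETITOR_INTELLIGENCE = "competitor intelligence"
    REGULATORY = "regulatory shifts"
    CUSTOMER_MARKET = "customer and market changes"
    MACRO_GEOPOLITICAL = "macroeconomic and geopolitical factors"


@dataclass(frozen=True)
class Signal:
    title: str
    source: str
    logged_on: date
    domain: Domain
    horizon: str      # "H1", "H2", or "H3"
    confidence: int   # assumed scale: 1 (low) to 5 (high)
    impact: int       # assumed scale: 1 (low) to 5 (high)

    def __post_init__(self) -> None:
        # Reject records that would break the shared tagging taxonomy.
        if self.horizon not in {"H1", "H2", "H3"}:
            raise ValueError(f"unknown horizon: {self.horizon!r}")
        for name in ("confidence", "impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")


def filter_signals(signals, domain=None, horizon=None):
    """Return the signals matching the given tags (None means 'any')."""
    return [
        s for s in signals
        if (domain is None or s.domain is domain)
        and (horizon is None or s.horizon == horizon)
    ]
```

Validating tags at the moment of logging is what makes the shared repository searchable later; free-text tags tend to drift between teams.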

Exhibit 6: Auto-generate PowerPoint presentations from collected trends

The output ranges from tactical intelligence on near-term competitor moves to strategic intelligence on long-range market shifts, with market intelligence on customer and industry trends sitting between the two. Industry experts and advisory networks provide on-the-ground knowledge of how specific markets, customers, and competitors are developing now and in the future.

Standard scanning captures what is already visible. The harder challenge is managing what is only just emerging.

Building block 5: Emerging technologies and weak signal management

Emerging technologies and weak signals require a distinct management process from standard competitive intelligence work. Organizations consistently wait for a new technology to gain broad recognition before committing analytical attention.

By the time it is obviously relevant, competitors who moved earlier have built capability advantages that take years to close. The difference between tactical intelligence and strategic intelligence is often just timing: the same signal, caught earlier, becomes advantage rather than a catch-up problem.

A structured evaluation framework scores each signal across five dimensions:

- Technology Readiness Level,

- time to commercial relevance,

- impact on current business models,

- alignment with existing capabilities,

- and competitive intensity among early movers.

Technologies that score above a defined threshold trigger a cross-functional deep-dive within 30 days. This time-bound response converts the framework from a scoring exercise into a decision-making tool.
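The scoring-and-trigger logic can be sketched as follows. The article does not specify scales, weights, or the threshold, so everything numeric here is an assumption: each dimension is taken to be pre-scored from 1 to 5 by an analyst, weights default to equal, and the threshold defaults to 3.5. The names `weighted_score` and `deep_dive_due` are hypothetical.

```python
from datetime import date, timedelta
from typing import Optional

# The five evaluation dimensions named above.
DIMENSIONS = (
    "technology_readiness",
    "time_to_relevance",
    "business_model_impact",
    "capability_alignment",
    "competitive_intensity",
)

DEEP_DIVE_WINDOW = timedelta(days=30)


def weighted_score(scores: dict, weights: Optional[dict] = None) -> float:
    """Weighted average across the five dimensions (assumed 1-5 scale each)."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimension scores: {missing}")
    if weights is None:
        weights = {d: 1.0 for d in DIMENSIONS}  # assumption: equal weights
    total_weight = sum(weights[d] for d in DIMENSIONS)
    return sum(scores[d] * weights[d] for d in DIMENSIONS) / total_weight


def deep_dive_due(scores: dict, evaluated_on: date,
                  threshold: float = 3.5,
                  weights: Optional[dict] = None) -> Optional[date]:
    """Return the deep-dive deadline if the score exceeds the threshold, else None."""
    if weighted_score(scores, weights) > threshold:
        return evaluated_on + DEEP_DIVE_WINDOW
    return None
```

Returning a due date rather than a boolean keeps the 30-day commitment attached to the score, which is the point of the time-bound trigger.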

Weak signals, early fragmented indicators not yet coherent enough to constitute trends, are tracked in a dedicated register and escalated when the same signal appears across three or more independent sources within 90 days. Success depends on having clear evaluation methods and scoring techniques before signals start arriving.

Exhibit 7: Context-aware AI to scout signals, trends and technologies

Without them, teams either under-react to disruptive technologies or over-invest in new ideas with limited relevance to core markets. At the other end of the spectrum, organizations that master this process reduce the risks of strategic surprise and gain the option to move first.
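The escalation rule for weak signals described above (three or more independent sources within 90 days) is simple enough to express directly. In this sketch, "independent" is approximated by distinct source names and the 90-day window is treated as inclusive; both are assumptions, as is the function name `should_escalate`.

```python
from datetime import date, timedelta


def should_escalate(sightings, min_sources: int = 3, window_days: int = 90) -> bool:
    """Decide whether ONE weak signal should be escalated.

    sightings: iterable of (date_seen, source_name) pairs for that signal.
    Returns True if any window of `window_days` contains sightings from at
    least `min_sources` distinct sources.
    """
    ordered = sorted(sightings)
    window = timedelta(days=window_days)
    # Any qualifying window can be shifted to start at one of the sightings,
    # so it is enough to test a window starting at each sighting in turn.
    for i, (start, _) in enumerate(ordered):
        sources = {src for seen, src in ordered[i:] if seen - start <= window}
        if len(sources) >= min_sources:
            return True
    return False
```

Repeated mentions from the same source do not count twice, which guards against a single noisy feed triggering escalation on its own.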

Identifying the right signals is necessary. But it only creates value when those signals reach the decisions that matter.

Building block 6: Roadmap decision integration

The connection between competitive intelligence and product portfolio decisions is where most foresight programs fail. Intelligence is collected, analyzed, and distributed while capital allocation decisions run in parallel.

The two processes rarely intersect. Organizations acting on real-time market intelligence report 30% higher revenue growth than those relying on periodic analysis, according to the Competitive Intelligence Alliance.

Closing the gap requires a structural mechanism. Foresight outputs map directly to the product portfolio governance calendar, with a formal briefing at every significant resource allocation decision.

Each major finding generates a one-page brief answering three questions:

- what does this competitive intelligence reveal about the competitive environment,

- which portfolio initiatives does it affect,

- and what action does it recommend.
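As a rough illustration, the one-page brief can be treated as a record with one field per question, so that incomplete briefs are caught before they reach a portfolio review. The class name `IntelligenceBrief`, its field names, and the helper `briefs_for_initiative` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class IntelligenceBrief:
    finding: str
    competitive_insight: str = ""   # what does this reveal about the competitive environment?
    affected_initiatives: list = field(default_factory=list)  # which portfolio initiatives does it affect?
    recommended_action: str = ""    # what action does it recommend?

    def is_complete(self) -> bool:
        """A brief is ready only when all three questions are answered."""
        return bool(
            self.competitive_insight.strip()
            and self.affected_initiatives
            and self.recommended_action.strip()
        )


def briefs_for_initiative(briefs, initiative: str):
    """Collect the complete briefs that name a given portfolio initiative."""
    return [
        b for b in briefs
        if b.is_complete() and initiative in b.affected_initiatives
    ]
```

Linking each brief to named initiatives is what lets a portfolio manager pull the relevant intelligence ahead of a specific resource allocation decision.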

This shifts the foresight team's output from trend reports to strategic decisions. It also generates interest from strategic portfolio managers who would otherwise remain disengaged. When intelligence clearly affects their decisions, the effort to sustain adoption drops.

Creating this discipline early, connecting it to real innovation and resource allocation choices, determines whether foresight becomes embedded in how the company competes, or remains a function that competitors can ignore.

Decision integration creates the value. Measurement makes that value visible and keeps the program accountable.

Exhibit 8: Projects, owners and dependencies shown on one roadmap

Building block 7: Continuous performance measurement

Measurement is the accountability structure that keeps all building blocks connected to strategic outcomes. A program tracking only activity metrics cannot demonstrate whether it is influencing the decisions that justify its existence. Without that evidence, foresight budgets are the first to be cut when organizational priorities shift.

Three dimensions are required.

- Activity metrics confirm the program is operating: signals logged, sources monitored, briefs produced by competitive intelligence professionals.

- Influence metrics track whether intelligence is reaching decisions: portfolio decisions with documented foresight input, and resource reallocations triggered by competitive intelligence activities.

- Outcome metrics assess organizational performance every six months, examining factors such as early warnings that preceded market shifts and innovation results across business units with and without active competitive benchmarking.

Together, these three dimensions give leadership a system for developing an evidence-based view of program value, one that focuses attention on the insights that drive decisions.

The signal-to-decision ratio (the share of escalated signals traceable to a documented decision within 90 days) is the single most useful program health indicator. Early-stage programs typically sit below 10%. Mature programs reach 40 to 60%.
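Computing the signal-to-decision ratio is straightforward once each escalated signal records its escalation date and, where one exists, the date of the documented decision. The sketch below assumes exactly that record shape; the names `EscalatedSignal` and `signal_to_decision_ratio` are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class EscalatedSignal:
    escalated_on: date
    decision_on: Optional[date] = None  # date of the documented decision, if any


def signal_to_decision_ratio(signals, window_days: int = 90) -> float:
    """Share of escalated signals with a documented decision within the window."""
    signals = list(signals)
    if not signals:
        return 0.0
    traced = sum(
        1 for s in signals
        if s.decision_on is not None
        and 0 <= (s.decision_on - s.escalated_on).days <= window_days
    )
    return traced / len(signals)
```

One design caveat: signals escalated fewer than 90 days ago have not yet had their full window, so a team may prefer to exclude them from the denominator when reporting.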

Knowing what to build is one thing. Building it in the right order is another.

From foundation to institutionalization: building your program in stages

The building blocks define what a complete foresight program requires. The stages define how to construct those capabilities: in what sequence, at what pace, and with what markers confirming readiness to advance.

Stage 1: Foundation — establishing the program's core

Objective: Create the conditions for purposeful environmental scanning. The goal is defined scope, accountable ownership, and minimum viable infrastructure, not immediate intelligence output.

Entry condition: Senior strategic planning stakeholders have committed time and organizational support. Without this, the foundation stage produces documents without authority to act on them.

The work: Run a two-hour workshop to identify three to five strategic questions anchoring scope, domain priorities, and time horizons. Assign roles formally: foresight lead, domain responsibilities for each intelligence professional, and governance participants. Informal assignments do not survive the first competing priority.

In weeks four through six, build minimum viable infrastructure: a shared signal repository, a tagging taxonomy, a scanning schedule, and a brief template.

Exit condition: The first intelligence brief has been produced and discussed in a formal session. The program is scanning, not planning to scan.

With the infrastructure in place, the next challenge is making intelligence reach the decisions that matter.

Stage 2: Integration — connecting intelligence to decisions

Objective: Transform the program from an intelligence producer into a decision input. This stage ensures competitive intelligence formally reaches the resource allocation moments where it creates value.

Entry condition: Foundation stage complete. The team is scanning consistently, signals are tagged reliably, and at least one governance review has taken place.

The work: Map the product portfolio governance calendar for the next 12 months and insert a competitive intelligence briefing at every major resource allocation decision point. Introduce the one-page brief as the standard output for every escalated signal. Formally integrate market research and competitive intelligence through shared repositories and joint review sessions, so that all information gathered feeds into a single strategic planning process.

For teams developing this integration for the first time, focus groups with portfolio managers can surface resistance early and improve brief relevance before formal governance rhythms are established.

Exit condition: Foresight is a documented input at a minimum of two portfolio decision points. Portfolio managers are referencing briefs in decision conversations, not simply receiving them.

Once intelligence is reaching decisions consistently, the final challenge is making that happen regardless of who is in the room.

Stage 3: Institutionalization — embedding foresight into operations

Objective: Make foresight structurally independent of the individuals who built it. The program should sustain itself through leadership changes, embedded in planning templates, governance frameworks, and onboarding processes.

Entry condition: Stage 2 complete. The signal-to-decision ratio is being tracked and portfolio managers are actively engaging with intelligence briefs.

The work: Embed foresight assumptions into strategic planning templates as required inputs. Document all scanning routines, tagging conventions, governance rhythms, and brief formats so new team members reach full productivity within 60 days. Expand analytical depth by making scenario planning, technology assessment, cross-impact analysis, and competitive benchmarking standard practice.

Exit condition: Portfolio managers are requesting specific briefs before upcoming decisions rather than waiting to receive them. The program sustains itself through the loss of a key team member.

A program that reaches Stage 3 looks and behaves very differently from one still stuck at Stage 1. Here is what that difference looks like in practice.

What a mature strategic foresight program looks like in practice

AI has raised the stakes on both sides of the foresight equation. Signals are growing faster than most programs can process, and the window between detection and decision is compressing. Maturity shows up as influence, not sophistication. The question is not whether the program is producing intelligence, but whether that intelligence is changing what the company invests in before competitors act.

When foresight drives resource allocation and investment decisions

The clearest indicator of maturity is foresight's visible effect on capital allocation. Product and business managers cite competitive intelligence findings when justifying investments across markets.

BCG's Future Preparedness Index found that companies using continuous foresight frameworks achieved 35% higher investment effectiveness and twice the speed of portfolio reprioritization during market shifts.

Our work with 500+ organizations shows that those embedding foresight in their innovation lifecycle move 30 to 50% faster from opportunity identification to resource allocation, and the performance gap compounds over time.

Dolby Laboratories is a useful example of what this looks like in practice. The company created a dedicated Futures Council, a cross-functional body of 30 stakeholders connecting foresight outputs directly to business planning. Intelligence on emerging trends, competitors, and developing markets feeds into a single platform visible to senior leadership, alongside every decision made in response. It is a working example of building block 3 (governance and decision rhythms) and building block 6 (roadmap decision integration) operating in concert at scale.

Reaching that level of integration requires infrastructure that manual processes cannot sustain.

From spreadsheets to systems: why scale demands dedicated software

Early-stage programs can operate on spreadsheets. At scale, three specific failures emerge:

- Siloed intelligence. Intelligence gathered in one part of the organization becomes invisible to others, creating blind spots in decisions that should have been informed by existing signals.

- Untraceable decisions. The link between signals and portfolio decisions becomes untraceable, making it impossible to demonstrate program ROI.

- Fragile continuity. Onboarding new team members requires tacit knowledge transfer rather than documented processes, creating continuity risks as teams evolve.

Each failure carries a direct strategic cost. A business unit developing a new product may be unaware that a colleague has already gathered competitor intelligence relevant to their target markets. Capital allocation decisions get made without the intelligence that could have changed them.

Dedicated foresight software resolves all three. Prioritize a centralized signal repository, traceable links between intelligence findings and portfolio decisions, consistent tagging taxonomies across all scanning domains, and an audit trail that compounds in value as historical data accumulates. The key question when evaluating platforms is whether the software closes the structural gap between intelligence collection and decision-making, or simply digitizes the same disconnected process.

Building foresight that actually changes decisions

The organizations that act on the future before competitors do are not better at collecting signals. They are better at converting signals into decisions. In an AI-driven world, the detection variable in the foresight equation is no longer the constraint. AI handles that.

Exhibit 9: A trend radar is shared with R&D and IT to highlight the updated industry landscape

The constraint is analytical capacity, decision speed, and the program architecture that connects intelligence to the moments where strategy is made. The organizations pulling ahead are fixing the structural gaps that AI cannot touch.

The seven building blocks address all three variables. The three stages sequence how to build them. The question is not whether your organization needs a foresight program for an AI-driven world. It is whether the one you have is built for it.

Managing environmental scanning, competitive intelligence, technology assessment, and portfolio integration at scale requires dedicated infrastructure. ITONICS provides that infrastructure.

FAQs about building a strategic foresight program

What is a strategic foresight program?

A strategic foresight program is a structured system for converting competitive intelligence into business decisions. It combines environmental scanning, governance rhythms, and portfolio integration so that signals about competitors, emerging technologies, and market shifts reach the people making resource allocation decisions before those decisions are made.

Unlike ad hoc horizon scanning or periodic trend reports, a mature foresight program runs continuously. Intelligence feeds governance on a rolling basis. Governance feeds decisions when they are needed, not when the next planning cycle happens to fall.

How is strategic foresight different from competitive intelligence?

Competitive intelligence is the raw material. Strategic foresight is the program that turns it into decisions.

Competitive intelligence covers the collection and analysis of signals: competitor moves, emerging technologies, regulatory shifts, market changes. Strategic foresight is the broader discipline that governs what to scan for, who is accountable for interpreting it, and how findings reach portfolio decisions and capital allocation conversations.

Most organizations have competitive intelligence activities. Fewer have a foresight program that connects those activities to strategic outcomes. That gap is where most of the value is lost.

What are the biggest challenges in building a foresight program?

The most common challenges are structural, not technical. They fall into three patterns: weak governance, where intelligence is produced but never formally integrated into planning cycles; diffuse ownership, where foresight is a secondary responsibility and gets deprioritized under pressure; and poor integration, where competitive intelligence runs parallel to capital allocation decisions without ever intersecting.

These gaps share a common root: a program assembled rather than deliberately designed. Adding more scanning tools or bigger datasets does not fix them. Each gap requires a specific structural fix: dedicated roles, formal governance rhythms, and a mechanism that connects intelligence outputs to actual decision points.

How do you measure the success of a foresight program?

Foresight programs require three measurement dimensions. Activity metrics track whether the program is operating: signals logged, sources monitored, and briefs produced. Influence metrics track whether intelligence is actually reaching decisions: portfolio decisions with documented foresight input, and resource reallocations triggered by competitive intelligence findings.

Outcome metrics assess organizational performance every six months, examining factors such as early warnings that preceded market shifts and innovation results across business units with and without active competitive benchmarking.

The single most useful indicator is the signal-to-decision ratio: what share of escalated signals led to a documented decision within 90 days. Early-stage programs sit below 10%. Mature programs reach 40 to 60%.

How does AI change strategic foresight?

AI expands signal detection, compresses analysis, and accelerates decision speed. These are the three variables that determine whether a foresight program creates a competitive advantage. What took weeks to surface manually can now be identified in hours across thousands of sources.

But AI does not fix the structural failures that stop intelligence from reaching decisions. It cannot create governance where none exists, assign accountability to diffuse ownership, or integrate intelligence into capital allocation processes that run in parallel.

Organizations that deploy AI tools without restructuring their foresight program get more signals entering a broken process. The result is more noise, not more clarity. The program architecture has to come first.
