Most governance of new product portfolios breaks between review cycles, not during them. A project loses strategic relevance in February. The governance board meets in April. Three months of R&D budget have funded a direction nobody will defend in the room.

This is R&D slack: a structural gap between when a portfolio problem becomes visible and when portfolio management is designed to catch it.

In industrial companies, that gap is expensive. Product development cycles run 18 to 36 months. A single misaligned project can consume 8 to 15% of the annual R&D budget before a formal review surfaces the problem. Across a portfolio of 20 to 40 active projects, the cumulative drag on portfolio performance compounds quickly.

Continuous, signal-based governance makes bottlenecks recognizable before the next review. It contrasts internal team opinions with external market signals so that decisions at every governance checkpoint are better informed.

That shift — from periodic portfolio governance to continuous, signal-based portfolio management — is what this article covers.

| AI governance shift | Slack reduced | What it is | How it works |
| --- | --- | --- | --- |
| Signal-based portfolio planning | Review-cycle | Continuous signal monitoring replacing batch information cycles | Patent filings, regulatory updates, and customer signals feed governance in real time. Decisions happen when conditions change, not on a fixed calendar. |
| AI-monitored project health scoring | Capacity | Continuous health scoring across four dimensions per active project | Strategic fit, milestone efficiency, market opportunity, and risk update continuously. A score drop triggers a governance alert before the next scheduled review. |
| Scenario modeling for resource allocation | Capacity | Dynamic allocation scenarios replacing static annual resource plans | AI pre-models alternative resource plans across the portfolio. When constraints appear, governance boards evaluate ready-made scenarios rather than building analysis under pressure. |
| Automated gate criteria monitoring | Review-cycle | Continuous gate readiness tracking replacing point-in-time manual reviews | Milestone rates, budget adherence, and compliance status score continuously. Governance boards enter gate meetings with live data instead of week-old prepared reports. |
| Portfolio-wide pattern recognition | Opinion | Aggregate analysis revealing systemic patterns invisible in individual project reviews | AI detects technology concentration, market concentration, and risk appetite drift across all active projects. Strategic misalignment surfaces before it appears in project outcomes. |

Exhibit 1: Five ways AI eliminates R&D slack in portfolio governance

Three types of slack slowing down product portfolio governance

R&D slack accumulates through governance gaps, not through individual bad decisions or resource constraints.

Product development teams make reasonable choices within a system that gives them incomplete, outdated information. Three distinct types of slack drive this problem in the new product development process.

Review-cycle slack: governance that only happens quarterly

Most portfolio governance frameworks run on quarterly or semi-annual review cycles. That cadence reflected how data collection worked before digital systems. Reporting took weeks. Aggregating project status required manual coordination across business units.

Many companies have merely digitized this reactive, cumbersome reporting process without changing its cadence.

Yet, markets move faster than review committees meet. A competitor launches an adjacent product. A technology becomes commoditized. A regulatory change shifts compliance requirements for an active project. These events happen on their own schedule.

When portfolio management only happens on a fixed calendar, product development allocates resources on conditions that no longer reflect reality. Research projects that warrant reallocation stay funded at their original resource levels. The governance structure itself generates the delay.

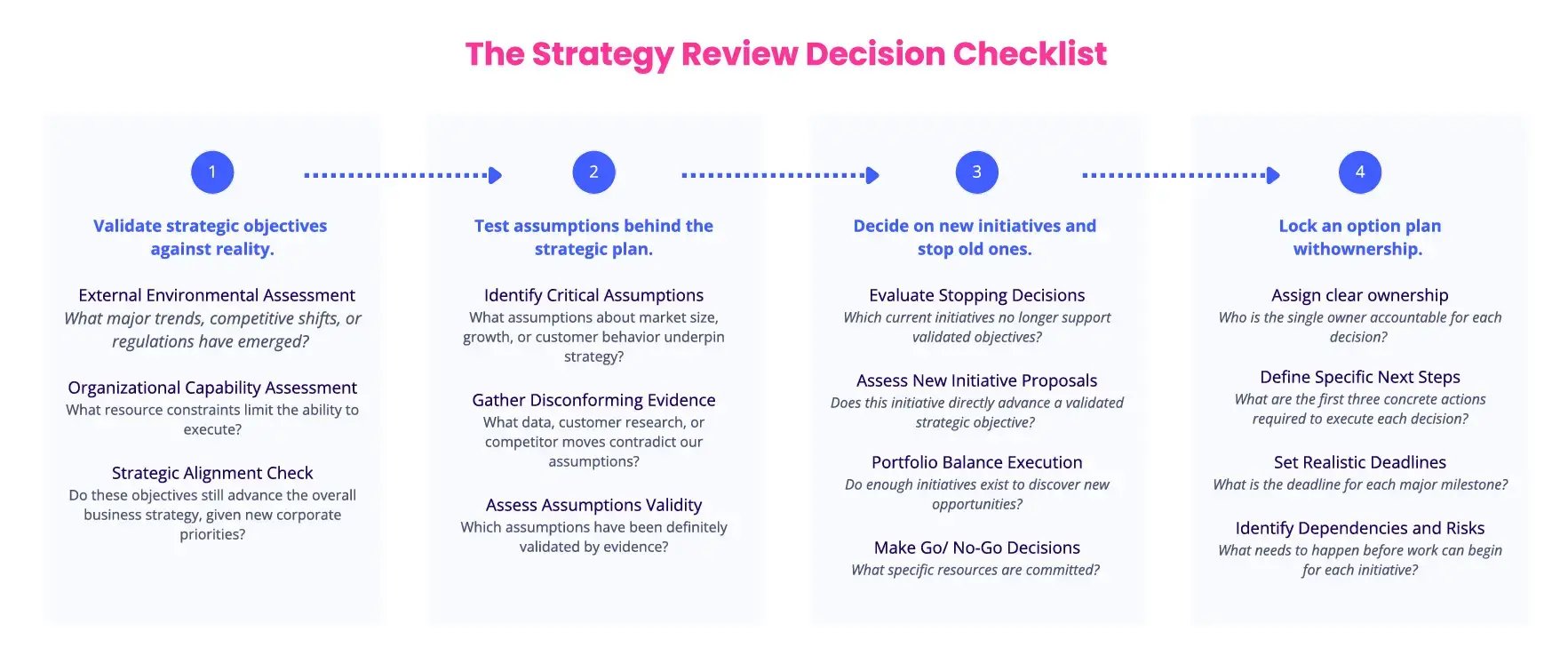

Exhibit 2: The strategy review decision checklist

Opinion slack: internal consensus masking weak signals

Most governance committees draw from internal domain experts. These experts carry deep product knowledge and long institutional memory. They also carry bias toward projects they championed and roadmaps they helped design.

This is opinion slack: internal consensus unchecked by external signals.

A project can score well in every internal portfolio review and still target a market that has fundamentally shifted. Competitor venturing activities reveal where the industry is moving. Customer feedback shows which capabilities are gaining adoption. Business analysis of external signals in adjacent markets predicts upcoming compliance requirements.

Portfolio governance that relies on internal expert ratings alone misses these inputs. Strategic decisions made without external signal data reflect what the team believes, not what the market confirms. The gap between those two things determines portfolio value over time.

Capacity slack: projects kept alive without active resource logic

Capacity slack occurs when projects remain active without considering changes in an organization's strategic objectives.

Stopping a project requires a formal decision. Continuing a project requires nothing. New product development processes and portfolio management structures that make stopping harder than starting accumulate projects over time.

In industrial R&D, the fully-loaded cost of one active engineering project typically runs between €500K and €2M per year. A portfolio carrying five strategically weak projects absorbs €2.5M to €10M annually in capacity slack. That budget cannot be reallocated to projects with a stronger strategic direction or better alignment with current strategic objectives.
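The arithmetic behind that range can be reproduced in a few lines. This is only a sketch: the function name `capacity_slack_range` is made up for illustration, and the inputs are the cost figures quoted above.

```python
def capacity_slack_range(weak_projects: int, cost_low: float, cost_high: float) -> tuple[float, float]:
    """Return the (low, high) annual budget absorbed by strategically weak projects."""
    return weak_projects * cost_low, weak_projects * cost_high


# Five weak projects at EUR 500K to EUR 2M fully loaded cost per year.
low, high = capacity_slack_range(weak_projects=5, cost_low=500_000, cost_high=2_000_000)
print(f"Annual capacity slack: EUR {low / 1e6:.1f}M to EUR {high / 1e6:.1f}M")
```

Swapping in an organization's own project count and cost band gives a first estimate of how much budget a stop decision would free up.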

This is a risk management failure as much as a governance one. Portfolio risks compound when weak projects consume resources that should fund the innovation pipeline — including the innovative products the organization needs to stay competitive.

Exhibit 3: The product development strategy process

Why these three compound into a strategic portfolio drift

These three types of slack amplify each other's effects:

- Review-cycle slack means governance decisions lag real-world conditions.

- Opinion slack means those decisions rely on incomplete data.

- Capacity slack means projects continue until a formal stop decision overrides the default.

Portfolio drift is the cumulative outcome. Misspending is the economic consequence. Unsatisfied expectations are the emotional burden.

A product development portfolio gradually diverges from organizational strategy, with no single decision causing the problem. By the time the divergence surfaces in a governance review, the portfolio has been misaligned for months.

Exhibit 4: The cost breakdown of delayed visibility in $50M portfolios (and the 8 AI SPM use cases)

How AI changes the product portfolio governance model

AI addresses all three types of slack by delivering continuous strategic alignment between portfolio investment and market conditions at the speed at which a decision is demanded.

AI changes portfolio governance at the mechanism level. It reshapes the governance processes and governance mechanisms that determine when decisions get made, on what information, and by whom.

The five shifts below each target a specific failure pattern.

1. Signal-based portfolio planning replaces periodic reviews

Traditional portfolio governance operates on information that is scattered across spreadsheets and presentations. It arrives in batches: quarterly project status reports, semi-annual roadmap reviews, and annual budget planning cycles.

Signal-based portfolio management operates on continuous information feeds:

- External signals from patent databases, scientific publications, competitor product launches, regulatory filings, market adoption data, and customer feedback.

- Internal events such as no activity for two weeks, missed deadlines, and unassigned owners.

The difference determines how quickly a governance board can act on changing conditions and unseen internal frictions.
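The internal events listed above are simple enough to check automatically. The sketch below is one possible way to do it, not a description of any particular product: the `ProjectStatus` fields and the `internal_signals` function are hypothetical names, and the 14-day idle limit mirrors the "two weeks" mentioned above.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ProjectStatus:
    name: str
    last_activity: date
    deadline: date
    delivered: bool
    owner: Optional[str]


def internal_signals(p: ProjectStatus, today: date, idle_limit_days: int = 14) -> list[str]:
    """Return the internal friction signals that currently apply to a project."""
    signals = []
    if (today - p.last_activity).days >= idle_limit_days:
        signals.append("no activity for two weeks")
    if p.deadline < today and not p.delivered:
        signals.append("missed deadline")
    if not p.owner:
        signals.append("unassigned owner")
    return signals


# Illustrative project record; all values are invented.
stalled = ProjectStatus(
    name="Sensor housing redesign",
    last_activity=date(2024, 2, 20),
    deadline=date(2024, 3, 1),
    delivered=False,
    owner=None,
)
print(internal_signals(stalled, today=date(2024, 3, 15)))
```

Running a check like this daily, rather than at the quarterly review, is what turns these frictions into signals.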

When product development teams receive continuous and tailored market trends and frontline data, governance decisions happen closer to the moment conditions change. A technology signal that would take three months to surface in a quarterly review appears in the portfolio management workflow within days.

This compresses the decision lag that generates review-cycle slack. Portfolio management activities shift from reactive correction to proactive adjustment. The governance mechanisms move from a periodic checkpoint to a continuous monitoring process aligned with current market demands.

Signal-based governance also covers the full pipeline — from idea generation through project execution and commercial launch. Early signals affect not just active projects but also which ideas enter the portfolio governance management plan in the first place.

Exhibit 5: ITONICS AI assistant flags off-strategy projects

What external and internal alert signals change in portfolio decisions

- Patent filing velocity in adjacent technology areas indicates where competitors are investing R&D resources. A governance board monitoring these market trends can adjust portfolio composition before a competitive gap emerges.

- Regulatory signal tracking gives product development teams advance warning of compliance requirements. Adjusting project scope six months ahead of a regulatory deadline costs a fraction of an emergency redesign during late-stage development.

- Customer feedback from market-facing teams, when integrated into portfolio governance, reveals which product capabilities generate adoption and which generate friction. Resource decisions grounded in this data allocate capacity toward validated customer value rather than internal assumptions — improving continuous strategic alignment between portfolio investment and market reality.

2. AI-monitored project health surfaces kill decisions earlier

Every portfolio carries projects that have weakened in strategic rationale since they were approved. The challenge is identifying them before the next scheduled review cycle.

AI-monitored project health scoring evaluates each project continuously against a defined set of governance criteria: strategic alignment with current strategic goals, resource consumption relative to projected milestones, market conditions affecting the target opportunity, and technical risk factors.

When a project's health score drops below a threshold, portfolio managers receive an alert before the governance committee meeting. The kill decision moves from a quarterly agenda item to an immediate portfolio management action.

What health scoring looks like in practice

A practical health scoring model for portfolio governance tracks four dimensions per project.

- Strategic fit scores measure alignment with current business strategy and strategic priorities. These update when organizational strategy shifts, not only when projects are reviewed on schedule.

- Milestone efficiency scores track actual resource consumption against planned milestones. Projects consistently consuming more than 120% of planned resources signal project execution problems that compound across the timeline. Key performance indicators for project execution (on-time rate, budget adherence, scope stability) feed directly into this dimension.

- Market opportunity scores assess whether the target opportunity has grown, shrunk, or shifted since project approval. A project approved for a €400M market opportunity looks different if that market has consolidated to €180M. This score draws on risk assessment of market dynamics alongside internal project data.

- Risk scores aggregate technical risk indicators, dependency risks, and portfolio-level risks into a single governance metric. Portfolio managers see risk concentration across the portfolio, enabling risk management decisions at the aggregate level, not only within individual projects.
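A four-dimension model like this can be sketched as a weighted average with an alert threshold. Everything numeric here is an assumption made for illustration: the weights, the threshold of 60, the 0 to 100 scale, and the convention that a higher risk score means a healthier project. The only figures taken from the text are the 120% overrun rule and the €400M to €180M market example.

```python
from dataclasses import dataclass

# Hypothetical weights and alert threshold; a real model would calibrate these.
WEIGHTS = {
    "strategic_fit": 0.30,
    "milestone_efficiency": 0.25,
    "market_opportunity": 0.25,
    "risk": 0.20,
}
ALERT_THRESHOLD = 60.0


@dataclass
class ProjectHealth:
    # Each dimension is scored 0-100, where higher means healthier.
    strategic_fit: float
    milestone_efficiency: float
    market_opportunity: float
    risk: float

    def score(self) -> float:
        """Weighted average across the four dimensions."""
        return sum(w * getattr(self, dim) for dim, w in WEIGHTS.items())


def market_opportunity_score(approved_size: float, current_size: float) -> float:
    """Score the current market size relative to the size at approval, capped at 100."""
    return min(100.0, 100.0 * current_size / approved_size)


def resource_overrun(planned: float, consumed: float, limit: float = 1.2) -> bool:
    """True when consumption exceeds 120% of plan (the rule of thumb quoted above)."""
    return consumed > limit * planned


project = ProjectHealth(
    strategic_fit=70.0,
    milestone_efficiency=50.0,
    market_opportunity=market_opportunity_score(approved_size=400.0, current_size=180.0),
    risk=60.0,
)
needs_alert = project.score() < ALERT_THRESHOLD
print(f"health={project.score():.2f} alert={needs_alert}")
```

The linear market score is a deliberately crude choice. The point is that the score is recomputed whenever an input changes, so the alert does not wait for the review calendar.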

The kill decision problem in traditional governance

Kill decisions are the hardest decisions governance committees face.

They require overriding past commitments, managing team expectations, and accepting a loss on sunk investment. Committees under social pressure default to continuation.

AI-scored health criteria reframe the kill decision. Portfolio managers present data rather than opinions. Governance bodies make informed decisions on scored evidence rather than contested interpretations. The governance process becomes easier to defend to executive leadership and senior management when the data trail is explicit and auditable by all relevant stakeholders.

3. Scenario modeling stress-tests resource allocation in real time

Static resource allocation plans assume the portfolio will develop as planned. In industrial product development, that assumption fails regularly.

A supplier delays a key component. A technology partner's pivot changes a dependency. A competitor launches early and compresses the market window. Each event requires product development teams to reallocate resources, often under time pressure and without full visibility of portfolio-wide implications.

Without scenario modeling, these reallocation decisions are made on incomplete information. Teams shift resources based on immediate urgency rather than portfolio-wide strategic logic.

Static vs. dynamic resource allocation

Static resource allocation plans lock resource commitments at the start of a planning cycle. Reallocation requires manual analysis, stakeholder negotiation, and governance approval — a process that takes weeks in most industrial companies.

Exhibit 6: Automatically analyzing strategic project portfolio health inside ITONICS

Dynamic resource allocation, supported by AI scenario modeling, generates alternative allocation plans continuously. When a constraint appears, governance committees evaluate pre-modeled scenarios rather than building new analysis from scratch. Portfolio balancing becomes an ongoing process rather than a crisis response.

This compresses reallocation cycle time from weeks to days. Product development teams maintain strategic alignment across the portfolio without waiting for the next formal governance cycle to allocate resources.

How scenario modeling exposes hidden bottlenecks

When two high-priority projects share a specialized cross-functional engineering team, static planning treats each project's resource plan independently. Scenario modeling shows the conflict explicitly: both projects cannot maintain their milestones simultaneously.

Governance committees acting on this visibility can resolve conflicts proactively — adjusting timelines, staggering resource requirements, or making a priority decision between projects before the conflict creates a milestone failure. Project portfolio management at this level requires this kind of portfolio-wide visibility.
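The shared-team conflict described above can be made explicit with a small capacity check that is run once per scenario. This is a simplified sketch: the team name, project names, FTE figures, and the `find_overbooked` helper are all invented for illustration.

```python
from collections import defaultdict

# (project, team, quarter, FTE demand)
Demand = tuple[str, str, str, float]


def find_overbooked(demands: list[Demand], capacity: dict[str, float]) -> dict:
    """Return {(team, quarter): (total demand, projects)} wherever demand exceeds capacity."""
    load = defaultdict(float)
    projects = defaultdict(set)
    for project, team, quarter, fte in demands:
        load[(team, quarter)] += fte
        projects[(team, quarter)].add(project)
    return {
        key: (total, sorted(projects[key]))
        for key, total in load.items()
        if total > capacity[key[0]]
    }


capacity = {"power-electronics": 6.0}  # FTE available per quarter

# Scenario 1: both projects keep their original timelines.
as_planned = [
    ("Project A", "power-electronics", "Q3", 4.0),
    ("Project B", "power-electronics", "Q3", 3.5),
    ("Project A", "power-electronics", "Q4", 2.0),
]
# Scenario 2: Project B is staggered by one quarter.
staggered = [
    ("Project A", "power-electronics", "Q3", 4.0),
    ("Project B", "power-electronics", "Q4", 3.5),
    ("Project A", "power-electronics", "Q4", 2.0),
]

print("as planned:", find_overbooked(as_planned, capacity))
print("staggered: ", find_overbooked(staggered, capacity))
```

In the first scenario the team is overbooked in Q3. In the second the conflict disappears, which is the kind of pre-modeled option a governance committee can evaluate instead of starting from a blank spreadsheet.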

4. Automated gate criteria qualifications remove governance bottlenecks

Stage-gate processes define the governance checkpoints that products must pass through during development. Each gate requires evidence that a project meets criteria before advancing to the next phase.

In traditional portfolio governance, gate qualification depends on manual evidence collection and human review. Project managers compile reports. The governance committee schedules reviews. Projects wait in queue.

Automated gate criteria monitoring changes this sequence and streamlines the process across the full development pipeline.

What manual gate reviews miss

Manual gate reviews evaluate project status at a point in time — using information prepared days or weeks earlier by project managers under time pressure.

Automated gate monitoring evaluates project status continuously. Product development teams see which projects are tracking toward gate qualification and which are drifting from criteria — before the gate meeting occurs.

This gives governance boards decision-relevant information earlier. Projects clearly tracking toward qualification advance efficiently. Projects drifting from criteria receive governance attention before the gate, when course correction is still inexpensive and expected benefits from the project are still achievable.

Exhibit 7: Custom workflow builder to manage stages and gates

Criteria that AI monitors continuously

Gate criteria suitable for automated monitoring include: milestone completion rates, technical readiness assessments, budget adherence scores, market validation evidence, regulatory compliance documentation status, and cross-functional team readiness indicators.

Each criterion generates a continuous score rather than a binary pass/fail at review time. The governance mechanisms applied at each gate become transparent and consistent. Governance committees enter gate meetings with complete, current information aligned with strategic priorities. Progress tracking becomes real-time rather than retrospective.
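One way to picture a continuous gate score is a readiness ratio per criterion, averaged across the gate. The criteria names come from the list above, but the minimum values, the 0 to 100 scale, and the `gate_readiness` function are assumptions made for this sketch.

```python
def gate_readiness(scores: dict[str, float], minimums: dict[str, float]) -> tuple[float, dict[str, float]]:
    """Return (overall readiness between 0 and 1, gap per criterion still below its minimum)."""
    ratios = []
    gaps = {}
    for criterion, minimum in minimums.items():
        score = scores.get(criterion, 0.0)
        # A criterion at or above its minimum counts as fully ready.
        ratios.append(min(score / minimum, 1.0))
        if score < minimum:
            gaps[criterion] = minimum - score
    return sum(ratios) / len(ratios), gaps


# Hypothetical gate definition and current project scores, in percent.
minimums = {"milestone_completion": 90, "budget_adherence": 85, "regulatory_documentation": 100}
scores = {"milestone_completion": 95, "budget_adherence": 80, "regulatory_documentation": 100}

readiness, gaps = gate_readiness(scores, minimums)
print(f"readiness={readiness:.0%} gaps={gaps}")
```

A project in this state is close to qualification but drifting on budget adherence, which is visible well before the gate meeting.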

Program management teams use these continuous gate scores to identify where the process is creating bottlenecks — and where automation can remove them without reducing governance quality.

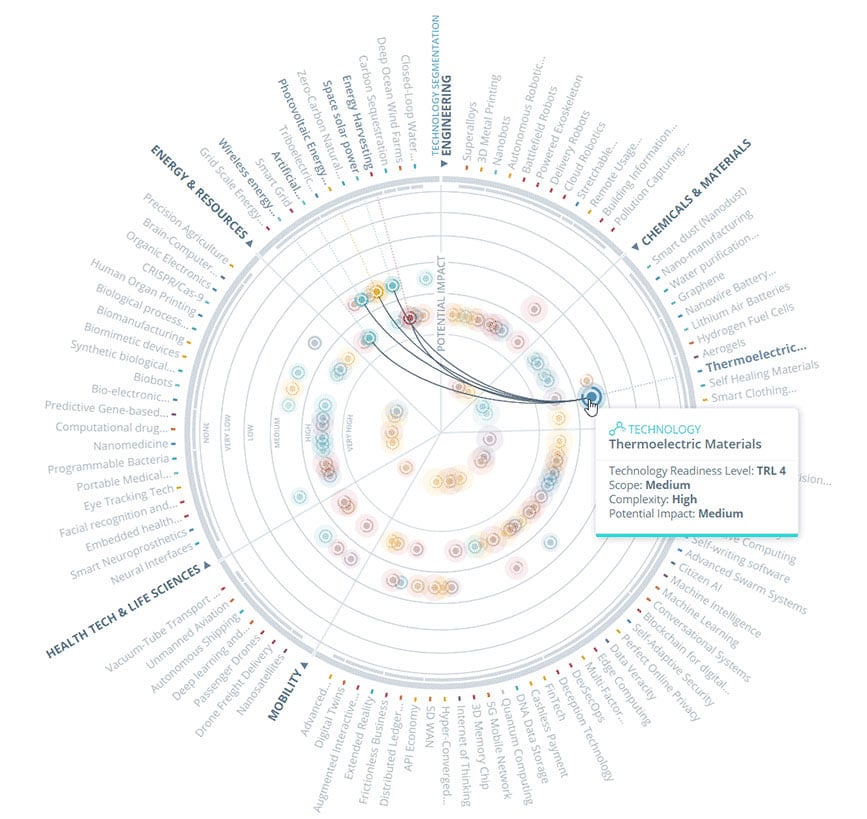

5. Portfolio-wide pattern recognition catches strategic drift

Individual project reviews assess projects in isolation. Portfolio-wide pattern recognition evaluates the entire portfolio as a system — surfacing insights that no individual project review can produce.

AI identifies patterns across project portfolios that isolated reviews miss. Technology concentration: too many projects depending on the same underlying platform. Market concentration: too many projects targeting the same customer segment. Resource concentration: too many development projects competing for the same specialized cross-functional capabilities.
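At its simplest, a concentration check is a share calculation over one project attribute. The sketch below assumes a flat list of project records and a 40% limit. Both are illustrative choices, as are the attribute names and the `concentration` helper.

```python
from collections import Counter


def concentration(projects: list[dict], attribute: str, limit: float = 0.4) -> dict[str, float]:
    """Return the values of `attribute` whose share of all projects exceeds `limit`."""
    counts = Counter(p[attribute] for p in projects)
    total = len(projects)
    return {value: n / total for value, n in counts.items() if n / total > limit}


# Invented portfolio for illustration.
portfolio = [
    {"name": "P1", "platform": "platform-A", "segment": "automotive"},
    {"name": "P2", "platform": "platform-A", "segment": "rail"},
    {"name": "P3", "platform": "platform-A", "segment": "energy"},
    {"name": "P4", "platform": "platform-B", "segment": "automotive"},
    {"name": "P5", "platform": "platform-C", "segment": "marine"},
]

print("technology concentration:", concentration(portfolio, "platform"))
print("market concentration:   ", concentration(portfolio, "segment"))
```

Each project here might pass its own review, yet three of five depend on the same platform. That only shows up when the portfolio is analyzed as a whole.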

What strategic drift looks like in product portfolios

Strategic drift accumulates when the portfolio mix diverges from organizational strategy without any single decision causing the divergence.

A governance framework that approves each project against individual business objectives can still produce a portfolio that collectively underserves the organization's strategic direction. The portfolio may be optimizing for existing product extensions while the market moves toward new technology platforms — in conflict with stated strategic goals. Strategic thinking at the portfolio level is required to catch this — and it requires data, not just intuition.

AI-enabled portfolio-wide analysis compares the portfolio mix against strategic objectives at the aggregate level. Portfolio managers see where the portfolio is overweight relative to strategy and where gaps are forming. Governance committees can make portfolio adjustments that correct aggregate drift — and identify where innovative products are underrepresented in the pipeline.
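Comparing the portfolio mix against strategy reduces to a difference between actual and target budget shares. The categories, budget figures, and target shares below are invented, and `mix_drift` is a hypothetical helper rather than a function of any specific tool.

```python
def mix_drift(budget_by_category: dict[str, float], target_share: dict[str, float]) -> dict[str, float]:
    """Return actual share minus target share per category (positive means overweight)."""
    total = sum(budget_by_category.values())
    return {
        category: budget_by_category.get(category, 0.0) / total - target
        for category, target in target_share.items()
    }


# Budget in EUR millions per category, and the mix the strategy calls for.
budget = {"incremental": 70.0, "adjacent": 20.0, "transformational": 10.0}
target = {"incremental": 0.5, "adjacent": 0.3, "transformational": 0.2}

for category, drift in mix_drift(budget, target).items():
    print(f"{category:<16} {drift:+.0%}")
```

In this example the portfolio is overweight in incremental work and underweight in transformational projects, the pattern that signals drift toward product extensions.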

Early signals AI detects before governance boards do

Pattern recognition in portfolio governance identifies leading indicators of strategic drift before it becomes visible in project outcomes.

When the portfolio consistently approves incremental improvements to existing products while rejecting more exploratory projects, AI flags the risk appetite imbalance against stated strategic goals.

Governance bodies receive these aggregate pattern insights at the portfolio level. Portfolio managers address structural issues in portfolio balance before they appear as missed market opportunities.

What good AI-enabled governance looks like in new product development

AI-enabled governance produces three observable outcomes in mature R&D organizations.

Three markers of a mature governance structure

Decision velocity

The first marker is decision velocity. Mature governance structures make investment decisions faster because they operate on current data. When portfolio managers receive continuous signals, leadership teams schedule decisions around conditions, not calendars.

Kill decisions, resource allocation decisions, and gate advancements happen in days. Decisions align with current strategic priorities rather than with stale plans from the last review cycle.

Decision traceability

The second marker is decision traceability. Every investment decision links to specific data inputs: the health score that triggered a kill review, the market signal that prompted a resource reallocation, the pattern analysis that identified a strategic gap.

This makes decisions documentable. Executive leadership and governance bodies can audit decisions against the data that informed them — satisfying governance authority requirements in regulated industrial environments.

Exhibit 8: Notification for approaching validation deadline

Continuous portfolio performance tracking

The third marker is portfolio performance tracking. Mature governance structures measure portfolio balance continuously: incremental versus transformational projects, near-term versus long-term investments, high-certainty versus exploratory bets.

AI-enabled governance makes the current balance visible and compares it against strategic goals continuously.

The internal vs. external data balance

The central challenge in product portfolio governance is calibrating the weight given to internal expert judgment versus external market data.

Internal expertise is irreplaceable for technical feasibility assessment, project execution risk evaluation, and cross-functional team readiness. Product development teams with deep domain knowledge make better project-level decisions than models operating without that organizational context.

External signals govern whether what is achievable is worth achieving, given current market trends and market demands. Mature portfolio management integrates both to ensure strategic alignment is maintained and that the portfolio delivers strategic benefits across the full development cycle.

The portfolio manager's role in AI-enabled governance

AI-enabled governance transforms portfolio management from a reporting function to a continuous steering function.

In traditional governance structures, the portfolio management office spends significant time collecting project status information, consolidating reports, and preparing governance board materials. This work produces information snapshots — accurate at the moment of preparation, outdated by the time the committee reviews them.

When AI handles continuous monitoring, portfolio managers focus on interpreting signals and facilitating governance decisions. The portfolio management office becomes a strategic function. Analytical thinking, not report production, defines the work.

Continuous strategic alignment becomes achievable when the portfolio management office operates on live data rather than periodic snapshots. Alignment is then checked at every decision point rather than only at quarterly reviews.

Achieving strategic objectives through portfolio governance

Strong portfolio governance creates the conditions to achieve strategic objectives systematically — not through individual project successes but through deliberate portfolio composition.

A portfolio governance management plan defines in advance what triggers governance decisions, what criteria determine project health, and how the governance board uses key metrics to evaluate progress across the portfolio. Organizations that operate with this level of governance structure consistently outperform those that rely on ad hoc portfolio review processes.

The strategic benefits of this approach compound over time. Portfolio value increases as weaker projects are identified and exit earlier. Strategic value compounds as resources flow to higher-potential initiatives.

Organizational goals shift from project delivery toward strategic outcomes — which is where portfolio governance creates its lasting competitive advantage.

How Lear reshaped R&D portfolio management with data

Lear Corporation — a global automotive technology leader with approximately 165,000 employees across 39 countries — faced a portfolio governance problem common to large industrial companies: the signal volume required to make informed decisions exceeded what any team could process manually.

Exhibit 9: Lear technology radar

The signal volume problem at scale

Lear Innovation Ventures (LIV) operates across automotive seating, E-Systems, and emerging mobility technologies. Portfolio management at this scale requires monitoring market trends, startup activity, regulatory developments, and competitor moves across multiple product domains and business units simultaneously.

Manual signal processing created two structural constraints. Coverage was incomplete — the team could only monitor signals within human bandwidth. Processing was slow — signals that warranted portfolio governance attention took time to surface before reaching the governance board.

The portfolio management structure — and its governance framework — needed to handle signal volume at machine scale, then route relevant signals to human decision-makers for evaluation.

Moving from expert opinion to structured evaluation

Using the ITONICS platform, Lear built a systematic approach to signal monitoring. The team scanned over 1 million weak signals, evaluated 118 market trends and technology trends, and identified 12 key trends with direct relevance to active projects and organizational goals.

The three-tier process LIV implemented — scouting, evaluation, processing — separates the machine task from the human task in portfolio governance.

AI handles scouting: continuous monitoring of news reports, academic research, and patent databases. This removes coverage constraints and eliminates the gap between signal emergence and signal detection.

Domain experts handle evaluation: collaborative assessment of AI-surfaced signals to identify those with genuine portfolio implications. This concentrates human expertise where it creates the most governance value.

Key stakeholders across product development and strategy handle processing: investment decisions informed by evaluated signals, with technology portfolios built to track internal capability gaps against identified market trends. Relevant stakeholders from each business unit contribute to the evaluation, ensuring governance decisions reflect expertise across the organization.

Portfolio outcomes from systematic governance

The governance framework Lear built creates a direct connection between external signal data and internal investment decisions.

When AI surfaces a technology signal, it moves through a structured evaluation process before reaching the governance board. Domain experts and product development team members assess relevance, competitive implications, and strategic fit. The governance committee receives a pre-evaluated signal with domain expert judgment already applied.

This structure addresses opinion slack directly. External data and internal expertise combine at the governance decision point rather than competing for influence.

John Absmeier, CTO of Lear Corporation, described the strategic objective: enhancing strategic alignment across business units and preemptively developing capabilities, products, and processes to continuously future-proof the business.

The governance structure ITONICS enabled makes that objective operational — turning signal volume into project portfolio management decisions rather than noise.

Implementing AI governance in your product development process

Building AI governance into the product development process requires six sequential steps. Each step builds the governance infrastructure that the next step depends on.

Step 1: Audit your current governance structure

Map every decision point in your current product development process: project approvals, gate reviews, resource allocation decisions, and kill reviews.

For each decision point, document three things:

- what information the governance committee currently uses,

- how old that information is at decision time,

- and how long the decision process takes from trigger to outcome.

This business analysis of existing governance processes establishes the baseline. It identifies where review-cycle slack, opinion slack, and capacity slack accumulate — and where strategic alignment breaks down in your specific governance structure.
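The three audit items map naturally onto a small record per decision point. The sketch below is one possible structure. The class name, field names, and example dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DecisionPointAudit:
    name: str
    information_used: list[str]
    information_date: date  # when the committee's information was prepared
    trigger_date: date      # when the need for a decision first became visible
    decision_date: date     # when the decision was actually made

    @property
    def information_age_days(self) -> int:
        """How old the information was at decision time."""
        return (self.decision_date - self.information_date).days

    @property
    def decision_lead_time_days(self) -> int:
        """How long the process took from trigger to outcome."""
        return (self.decision_date - self.trigger_date).days


gate_review = DecisionPointAudit(
    name="Gate 3 review",
    information_used=["status report", "budget sheet"],
    information_date=date(2024, 3, 18),
    trigger_date=date(2024, 2, 5),
    decision_date=date(2024, 4, 15),
)
print(gate_review.information_age_days, gate_review.decision_lead_time_days)
```

Collecting these two numbers for every decision point gives a measurable baseline for review-cycle slack.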

Step 2: Define what triggers a governance decision

Establish a signal library: the specific conditions that should trigger a governance action before the next scheduled review.

Trigger conditions include project health scores dropping below defined thresholds, external market trends data affecting a target opportunity, resource consumption exceeding planned rates by more than 15%, and regulatory signals changing compliance requirements for active projects.

Each trigger connects to a specific governance action: a kill review, a resource allocation decision, a scope adjustment, or an acceleration. Document these in a portfolio governance management plan so project managers and product development teams know what governance response each trigger requires.
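A signal library can be written down as a list of conditions, each paired with the governance action it calls for. The pairing of triggers to actions below is an assumption made for illustration, as are the health score threshold of 60, the field names, and the `governance_actions` helper. Only the 15% overrun figure comes from the text.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Trigger:
    name: str
    condition: Callable[[dict], bool]
    action: str


# Hypothetical signal library; thresholds and trigger-to-action pairs are illustrative.
SIGNAL_LIBRARY = [
    Trigger("health score below threshold", lambda p: p["health_score"] < 60, "kill review"),
    Trigger(
        "resource consumption more than 15% over plan",
        lambda p: p["consumed"] > 1.15 * p["planned"],
        "resource allocation decision",
    ),
    Trigger("regulatory signal affects project", lambda p: p["regulatory_change"], "scope adjustment"),
]


def governance_actions(project: dict) -> list[str]:
    """Return the governance actions triggered by a project's current state."""
    return [t.action for t in SIGNAL_LIBRARY if t.condition(project)]


project = {"health_score": 72, "planned": 100.0, "consumed": 120.0, "regulatory_change": False}
print(governance_actions(project))
```

Keeping the library as data rather than buried in logic makes it easy to publish in the governance plan, so teams can see which condition leads to which response.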

Step 3: Connect external signal feeds to internal portfolio data

Link external data sources to the portfolio governance system. Patent databases, market research feeds, regulatory monitoring services, and competitor intelligence sources feed into the governance platform alongside internal project data.

Exhibit 10: ITONICS alert informing about an increase in the trend "Autonomous Networks"

Product development teams see internal project status and external market conditions in the same governance view. Investment decisions draw on both data streams simultaneously — eliminating the information boundary that separates internal portfolio management from external market reality.

Step 4: Establish AI-scored health criteria per project

Define the health scoring model for each project. Assign weights to strategic fit, milestone efficiency, market opportunity, and risk assessment dimensions based on each project's stage and type.

Projects targeting the development of innovative products may weight strategic objectives and market signals more heavily, since market uncertainty is the primary portfolio risk. Incremental extensions of an existing product may weight project execution efficiency and milestone adherence more heavily.

Document the expected benefits each project must deliver at each governance gate. Key performance indicators for each dimension should be defined before the first AI-assisted review runs.
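Type-specific weighting can be expressed as one weight profile per project type. The two profiles and their numbers below are invented to illustrate the idea. In practice the governance board would set them.

```python
# Hypothetical weight profiles; each must sum to 1.
WEIGHT_PROFILES = {
    "innovative": {
        "strategic_fit": 0.35,
        "market_opportunity": 0.35,
        "milestone_efficiency": 0.15,
        "risk": 0.15,
    },
    "incremental": {
        "strategic_fit": 0.15,
        "market_opportunity": 0.15,
        "milestone_efficiency": 0.50,
        "risk": 0.20,
    },
}


def validate_profiles(profiles: dict[str, dict[str, float]]) -> None:
    """Raise ValueError if any profile's weights do not sum to 1."""
    for name, weights in profiles.items():
        if abs(sum(weights.values()) - 1.0) > 1e-9:
            raise ValueError(f"weights for '{name}' do not sum to 1")


def weighted_score(scores: dict[str, float], project_type: str) -> float:
    """Weighted health score (0-100) using the profile for the given project type."""
    weights = WEIGHT_PROFILES[project_type]
    return sum(weights[dim] * scores[dim] for dim in weights)


validate_profiles(WEIGHT_PROFILES)

# The same dimension scores rated under both profiles.
scores = {"strategic_fit": 80.0, "market_opportunity": 40.0, "milestone_efficiency": 90.0, "risk": 70.0}
for project_type in WEIGHT_PROFILES:
    print(f"{project_type}: {weighted_score(scores, project_type):.1f}")
```

The same project looks weaker when rated as an innovative bet, because its market score is low, and stronger when rated as an incremental extension, because it executes well.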

Step 5: Run your first AI-assisted portfolio review

Use the AI-scored health data, external signals, and scenario models in the next governance board meeting.

Leadership teams evaluate investment decisions against scored evidence. Portfolio managers facilitate the decision process using current data. Project managers prepare gate materials using live health data rather than manually compiled status reports.

The first AI-assisted review typically surfaces two to three portfolio issues that the previous manual review cycle had not identified. These early findings build committee confidence and validate the governance framework with leadership stakeholders.

Building a sound governance framework takes time, but the first AI-assisted review accelerates the learning curve significantly.

Step 6: Build governance accountability into the workflow

Establish a governance accountability framework. Each investment decision links to the data that informed it, the governance committee that made it, and the expected outcome it was designed to achieve.

Review accountability data at each subsequent governance meeting. The governance committee tracks outcomes against stated targets and identifies where decision logic needs refinement based on outcome evidence.
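An accountability record only needs to link four things: the decision, who made it, the evidence behind it, and the expected outcome. The structure below is a sketch with hypothetical field names and invented example values.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class DecisionRecord:
    decision: str
    decided_by: str
    decided_on: date
    evidence: list[str]            # the data inputs that informed the decision
    expected_outcome: str
    target_value: float
    actual_value: Optional[float] = None  # filled in at a later review

    def outcome_gap(self) -> Optional[float]:
        """Actual minus target, or None while the outcome is still open."""
        if self.actual_value is None:
            return None
        return self.actual_value - self.target_value


record = DecisionRecord(
    decision="Reallocate two engineers from Project B to Project A",
    decided_by="Portfolio governance committee",
    decided_on=date(2024, 4, 15),
    evidence=["health score alert for Project B", "scenario comparison"],
    expected_outcome="Months of schedule recovered on Project A",
    target_value=1.5,
)

# At the next review, the measured outcome is recorded against the target.
record.actual_value = 1.0
print(record.outcome_gap())
```

Reviewing the gap between target and actual across many records is what lets the committee see which kinds of decision logic hold up.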

This feedback loop strengthens governance effectiveness across review cycles. Organizations allocate resources more effectively in each subsequent cycle as decision quality improves.

Portfolio management activities become more precise as the governance structure learns from its own output — and organizational goals shift from activity management to strategic outcome delivery.

How AI-powered portfolio governance tools comply with security needs

Industrial companies managing sensitive product portfolios operate under strict data security requirements. IT security teams often raise the strongest concerns about AI-powered portfolio governance tools and can block their adoption.

Portfolio data — active project details, technology roadmaps, competitive positioning, and resource allocation plans — represents core intellectual property. Risk management for this data is a non-negotiable requirement for any AI-powered portfolio governance platform.

Exhibit 11: The ITONICS PRISM infrastructure and security layers

Data residency and sovereignty requirements

Portfolio governance platforms used in regulated markets must support data residency controls. Project data, signal feeds, and governance records need to remain within defined geographic boundaries to comply with data sovereignty regulations.

Enterprise-grade portfolio management tools support regional deployment options, such as EU, US, or Asia-Pacific data boundaries, so that data sovereignty compliance risks are addressed by design.

Role-based access control in governance structures

Portfolio governance involves multiple stakeholder groups with different information needs.

Project managers access their project data and milestone tracking. Portfolio managers access portfolio-wide health scores and external signals. The governance committee accesses decision-relevant summaries and scenario models. Executive leadership accesses strategic portfolio analytics and aggregate portfolio performance data.

Role-based access control ensures each stakeholder group sees only the information relevant to their governance function. Organizations with multiple project portfolios especially benefit from this access segmentation. Sensitive competitive intelligence can be restricted to specific governance bodies rather than distributed across the entire organization, which protects it from unauthorized access.
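The role-to-information mapping described above can be sketched as a deny-by-default permission table. The role and resource names below are hypothetical labels derived from the four stakeholder groups. A real deployment would typically take roles from the enterprise identity provider rather than hard-code them.

```python
# Hypothetical sketch of role-based access control with deny-by-default.
from enum import Enum


class Role(Enum):
    PROJECT_MANAGER = "project_manager"
    PORTFOLIO_MANAGER = "portfolio_manager"
    GOVERNANCE_COMMITTEE = "governance_committee"
    EXECUTIVE = "executive"


# Assumed resource labels; each role is granted only what its function needs.
PERMISSIONS = {
    Role.PROJECT_MANAGER: frozenset({"own_project_data", "milestone_tracking"}),
    Role.PORTFOLIO_MANAGER: frozenset({"portfolio_health_scores", "external_signals"}),
    Role.GOVERNANCE_COMMITTEE: frozenset({"decision_summaries", "scenario_models"}),
    Role.EXECUTIVE: frozenset({"strategic_analytics", "aggregate_performance"}),
}


def can_access(role: Role, resource: str) -> bool:
    """Allow only resources explicitly granted to the role; deny everything else."""
    return resource in PERMISSIONS.get(role, frozenset())


print(can_access(Role.PROJECT_MANAGER, "milestone_tracking"))
print(can_access(Role.PROJECT_MANAGER, "scenario_models"))
```

The design choice worth noting is the default: a resource that is not listed for a role is refused, so adding a new data type never widens access by accident.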

Audit trails for governance decisions

Governance decisions affecting active R&D investments require full documentation: which data informed the decision, which governance board made it, what outcome was expected.

AI-powered portfolio governance platforms maintain complete audit trails for every governance action. Portfolio managers can demonstrate compliance with internal governance mechanisms and external regulatory requirements. Leadership teams can review past investment decisions against actual outcomes — creating the feedback loop that improves governance effectiveness over time.
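To illustrate what a tamper-evident audit trail can look like, the sketch below chains each entry to the previous one with a SHA-256 hash. Hash chaining is a technique chosen here for illustration and is not taken from the source. The `AuditTrail` class and its field names are assumptions.

```python
# Hypothetical sketch: append-only audit trail with hash chaining.
import hashlib
import json


def _digest(body: dict) -> str:
    """Stable SHA-256 over the entry body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditTrail:
    def __init__(self):
        self._entries = []

    def append(self, action: str, actor: str, evidence: list, expected_outcome: str) -> dict:
        """Record a governance action, linked to the hash of the previous entry."""
        prev_hash = self._entries[-1]["hash"] if self._entries else ""
        body = {
            "action": action,
            "actor": actor,                      # governance body that decided
            "evidence": evidence,                # data that informed the decision
            "expected_outcome": expected_outcome,
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; False if any entry was altered or reordered."""
        prev_hash = ""
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Each entry captures the three elements named above (data, deciding body, expected outcome). Because every hash depends on the previous one, editing an old entry makes `verify()` fail for the trail.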

Integration security with enterprise systems

Portfolio governance tools connect to enterprise systems: ERP platforms, project management systems, financial reporting tools, and external data feeds.

Enterprise-grade integration architecture uses authenticated API connections, encrypted data transport, and monitored access logs. Portfolio governance platforms in industrial environments typically support SSO integration with enterprise identity providers — ensuring access control aligns with corporate security policy rather than operating as a separate credential system.

AI model transparency and auditability

Product development teams need to understand how AI health scores and signal recommendations are generated. Opaque AI recommendations create governance risk: investment decisions made on outputs that the governance board cannot interrogate or challenge.

Mature portfolio management platforms provide explainable AI outputs. When a project health score changes, portfolio managers see which criteria drove the change and by how much. The governance committee can evaluate whether the AI assessment aligns with their judgment or warrants additional expert review — maintaining governance authority over every investment decision.
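A simple way to make a score change explainable is to break it down by criterion. The sketch below assumes the health score is a linear weighted sum, which is an assumption for illustration. Under that assumption, each criterion's contribution to the change is its weight times the change in its score.

```python
# Hypothetical sketch: attribute a health-score change to individual criteria.
# Assumes a linear weighted score; weights and scores below are illustrative.

def explain_score_change(weights: dict, before: dict, after: dict):
    """Return (total change, per-criterion contributions ranked by magnitude)."""
    contributions = {c: weights[c] * (after[c] - before[c]) for c in weights}
    total = sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return total, ranked


weights = {
    "strategic_fit": 0.25, "milestone_efficiency": 0.25,
    "market_opportunity": 0.25, "risk": 0.25,
}
before = {"strategic_fit": 80, "milestone_efficiency": 70, "market_opportunity": 60, "risk": 50}
after = {"strategic_fit": 80, "milestone_efficiency": 50, "market_opportunity": 64, "risk": 50}

total, ranked = explain_score_change(weights, before, after)
print(total)       # -4.0
print(ranked[0])   # ('milestone_efficiency', -5.0)
```

In this example the overall score falls by 4 points. The ranking shows that milestone efficiency drove a 5-point drop, partly offset by a 1-point gain from market opportunity, which gives the governance committee something concrete to challenge.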

How ITONICS supports governance in industrial companies at scale

ITONICS provides the portfolio governance infrastructure that industrial product development teams need to move from periodic reviews to continuous, signal-based portfolio management.

The platform connects external signal monitoring, internal portfolio tracking, and governance workflow management in one place.

Exhibit 12: A project board across three innovation horizons and RAG categories

For idea generation and external market trends, portfolio managers can draw on over 60 million continuously scanned signals, with AI filtering them down to the portfolio domains that matter. They also get health-scored portfolios, pre-modeled resource allocation scenarios, and pattern analysis across the full portfolio in one governance view, with complete audit trail documentation and access controls built in.

For industrial companies with demanding portfolio governance requirements, ITONICS supports role-based access control, regional data residency, enterprise SSO, and the explainable AI outputs that governance accountability requires.

Customers including Lear Corporation, Toyota Motor Europe, and SIEMENS use ITONICS to maximize strategic value from their portfolios — connecting external signal data to investment decisions across business units, geographies, and product domains.

FAQs on AI-driven product portfolio governance

What is product portfolio governance?

Product portfolio governance is the organizational structure, governance processes, and criteria that determine how a company makes decisions across its active projects.

It covers project approvals, resource allocation, gate reviews, and kill decisions. Effective portfolio governance aligns organizational strategy with portfolio investment — ensuring active projects reflect current strategic direction and help achieve strategic objectives rather than perpetuating outdated decisions.

How does AI improve portfolio management in industrial companies?

AI improves portfolio management by delivering continuous monitoring between scheduled review cycles.

Portfolio managers receive project health scores, external market trends data, and resource allocation scenarios in real time.

The governance committee makes informed decisions on current data rather than information prepared weeks earlier.

The governance structure operates on conditions rather than calendars — which is what continuous strategic alignment requires.

What is R&D slack and how does AI reduce it?

R&D slack is the resource waste that accumulates when portfolio governance operates too slowly to catch misaligned projects. It includes review-cycle slack, opinion slack, and capacity slack. AI reduces R&D slack by providing continuous health monitoring, external signal feeds, and scenario-based reallocation tools, which make portfolio management faster and better informed across the new product development process.

How does the portfolio manager's role change with AI-enabled governance?

The role of portfolio managers shifts from data aggregation to decision facilitation. AI handles continuous monitoring and health scoring across active projects.

Portfolio managers focus on interpreting signals, facilitating governance decisions, and managing portfolio-level implications.

The portfolio management office becomes a strategic function — contributing strategic thinking to governance decisions rather than logistics to governance preparation.

What security requirements do AI portfolio governance tools need to meet?

Industrial companies require data residency controls, role-based access control, complete audit trails, secure enterprise system integration, and explainable AI outputs.

Risk management for sensitive intellectual property — including technology roadmaps, competitive positioning, and resource allocation plans — must be built into the platform architecture.

The governance committee needs to interrogate AI recommendations, not simply accept them as portfolio management outputs.

How long does it take to implement AI-enabled product portfolio governance?

Implementation follows six sequential steps:

- governance audit,
- trigger definition,
- external signal integration,
- health criteria setup,
- first AI-assisted review,
- and accountability framework deployment.

Industrial companies with existing portfolio management infrastructure typically complete initial implementation in 12 to 16 weeks. Full governance maturity develops over the first two to three governance cycles following deployment.
